Frazier is an assistant professor at the Crump College of Law at St. Thomas University. Starting this summer, he will serve as a Tarbell fellow.

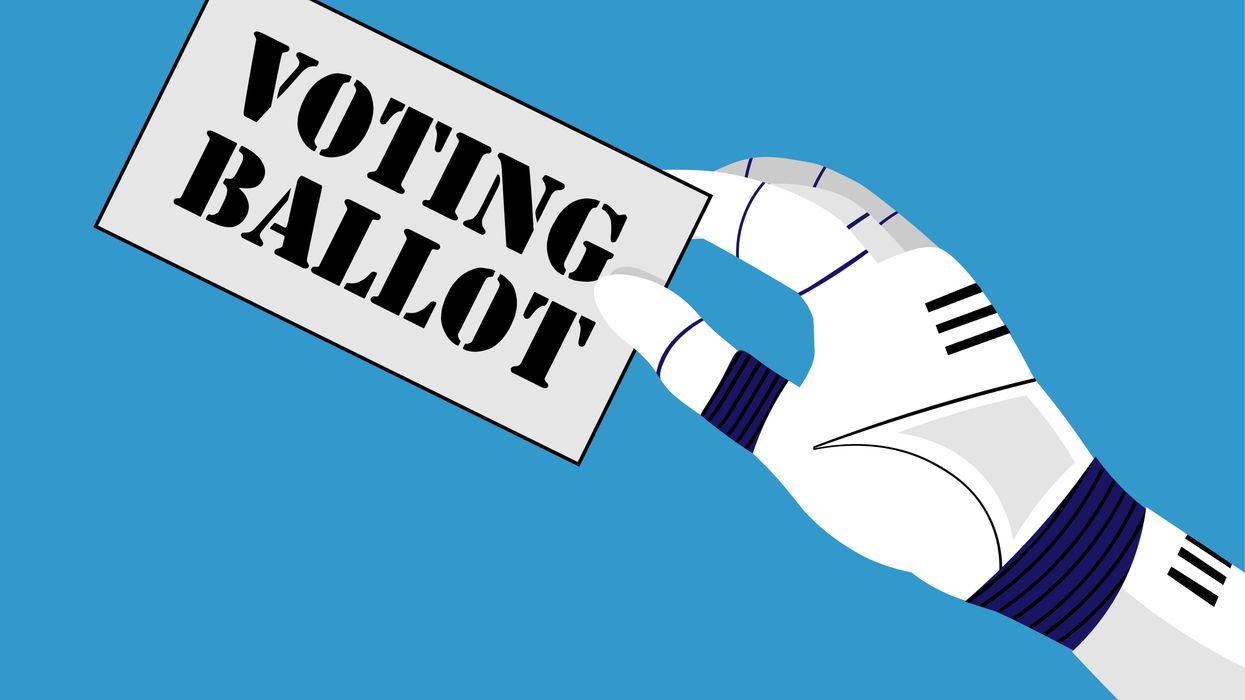

With the supposed goal of diversifying the electorate and achieving more representative results, State Y introduces “VoteGPT.” This artificial intelligence agent studies your social media profiles, your tax returns and your streaming accounts to develop a “CivicU.” This artificial clone would use that information to serve as your democratic proxy.

When an election rolls around, State Y grants you the option of having your CivicU fill in the ballot on your behalf — there’s no need to study the issues, learn about the candidates or even pick up a pencil. Surely CivicU will vote in your best interest. In fact, it may even vote “better” than you would, based on its objective consideration of which candidates and ballot measures would improve your well-being.

In the first election with CivicU, “voter” turnout reaches nearly 90 percent, with AI agents casting about a third of all votes. Soon after Election Day, a losing candidate for a seat in the House of Representatives challenges the constitutionality of votes cast by CivicU.

A robust legal debate ensues. State Y points to its constitutional authority to decide the manner of elections and notes the absence of any federal law banning AI agents in an electoral context. What’s more, State Y reviews historical records that make clear that proxy voting (appointing someone to vote on your behalf) was a relatively common practice in colonial America. The candidate counters that surely the Founders could not have anticipated and would not have tolerated a vote cast without active involvement by the voter in question. The candidate also notes that proxy voting, while permissible in some countries like the United Kingdom, has not been adopted by the United States. The developers of VoteGPT file an amicus brief arguing that a voter’s CivicU is indistinguishable from the voter: they are one and the same, so this is more akin to someone Googling how they should vote than someone delegating their voting power.

Who wins and why?

This may seem like an implausible scenario, but the rapid development of AI as well as its use in electoral contexts suggests otherwise. In fact, current trends indicate that AI will only come to play a larger role in whether and how people participate in democracy. In short, it is a matter of when and not if certain partisan interests will leverage AI agents to bolster the odds of their electoral success.

Though proponents of AI agents might claim such efforts reflect democratic ideals such as a more representative electorate, excessive use of such agents (like allowing them to cast votes on behalf of users) may actually cause the opposite result — decreasing the legitimacy of our elections and sowing distrust in our institutions.

Before CivicU or something like it becomes a reality, we need to clarify what limits the Constitution places on agentic voting. My own interpretation is that the Constitution prohibits the tallying of any vote not explicitly cast by a human. Though such a finding may seem obvious to some, it is important to stress that a human must always be “in the loop” when it comes to formal democratic activities. The development of any alternative norm, such as allowing AI agents to serve as our proxies in government affairs, promises to undermine our democratic autonomy and stability.