Levine is the senior elections integrity fellow at the German Marshall Fund's Alliance for Securing Democracy, where he assesses vulnerabilities in electoral infrastructure, administration, and policies.

Savoia is a program assistant for the Alliance for Securing Democracy at GMF, where he serves as the lead author of ASD's weekly newsletter, the Securing Democracy Dispatch.

In the days leading up to Slovakia’s highly contested parliamentary election, deepfakes generated by artificial intelligence spread across social media. In one posted by the far-right Republika party, Progressive Slovakia leader Michal Šimečka apparently “announced” plans to raise the price of beer if elected. In a second, more worrisome fake audio recording, Šimečka “discussed” how his party would rig the election, including by buying votes from the country’s Roma minority.

Šimečka never said those words, but it is unclear how many of the millions who heard the recordings across Facebook, TikTok, and Telegram knew that, even though Slovak-language fact-checkers did their best to debunk the clips.

While it is difficult to assess whether, and to what extent, the deepfakes manipulated Slovak voters’ choices, it is clear that artificial intelligence is increasingly being used to target elections and could threaten future ones. To protect its elections, the United States must learn from Slovakia and bolster its ability to counter AI-generated disinformation threats before November 2024.

The threat of falsified information is not new for democracies, but artificial intelligence is likely to compound these existing problems, particularly in the near term. Authoritarian adversaries like Russia, China, and Iran will exploit different types of artificial intelligence to magnify their influence campaigns, as the Department of Homeland Security’s 2024 Homeland Threat Assessment recently warned. With this technology becoming widespread, more actors are able to create falsified audio and video material, and in some cases have already begun to do so. Such material is potentially better able to mislead voters than textual disinformation.

In hyperpolarized societies like the United States, AI-generated disinformation may undermine voters’ ability to make informed judgments before elections. Deepfakes that purport to show corrupt dealings or election rigging behind closed doors, like those seen in Slovakia, could increase voter apathy and undermine faith in democracy, especially for a U.S. audience already awash in baseless claims of election fraud. Finally, different kinds of AI tools, such as chatbots and deepfake images, audio, and video, could make it harder for U.S. voters to reject content designed to be manipulative, which could raise questions about the legitimacy of elections, especially those that are closely contested.

The United States has already acknowledged some of the risks from artificial intelligence. The U.S. Senate Rules Committee recently held a hearing on AI-related threats to elections, and bills have been introduced in both chambers to address disclosures in political ads. The White House published the blueprint for an “AI Bill of Rights” to govern the technology’s development and use. At the state level, bills on the matter continue to be proposed and passed.

However, there is still much more that can be done before the 2024 presidential election to safeguard the vote. First, the U.S. Congress should mandate that large social media platforms like Facebook, X (formerly Twitter), and TikTok require labels on all AI-generated content and remove posts that fail to disclose this. Even if AI-generated content were to spread, users would be warned of its origin via a clear marking. Congress could learn from the European Union’s (EU) approach on this front. The EU’s Digital Services Act, a comprehensive regulation whose obligations for the largest platforms began to apply earlier this year, mandates similar labels for deepfakes.

Second, political campaigns should pledge to label all AI-generated content in ads and other official communications. On the platform front, Google and YouTube already require that ads using AI-generated voice and imagery be clearly labeled. Campaigns should also avoid using artificial intelligence to mimic a political opponent’s voice or likeness, as happened in Slovakia but also in the United States, Poland, and elsewhere, because this portrays opponents as saying words or performing actions they did not actually say or do. In the longer term, the U.S. Congress should pass legislation requiring this sort of disclosure.

Lastly, journalists and newsrooms should develop clear guidelines on how to cover AI-generated content. This could be done, in part, by consulting with AI experts and building sources among people who audit AI systems, talking with academics who study the data, conversing with technologists who work at the companies that developed the tools, and meeting with regulators who see these tools through a different lens. Journalists could also try to look at the human data scooped up to train these models and the people who made choices to optimize them. Outlets should also seek to educate their audiences about how to identify AI-generated content.

If Slovakia’s example is any indication, the United States and other democracies must take AI-generated disinformation seriously. An open information space is key to democracy, making it important to protect that space from this sort of willful manipulation.

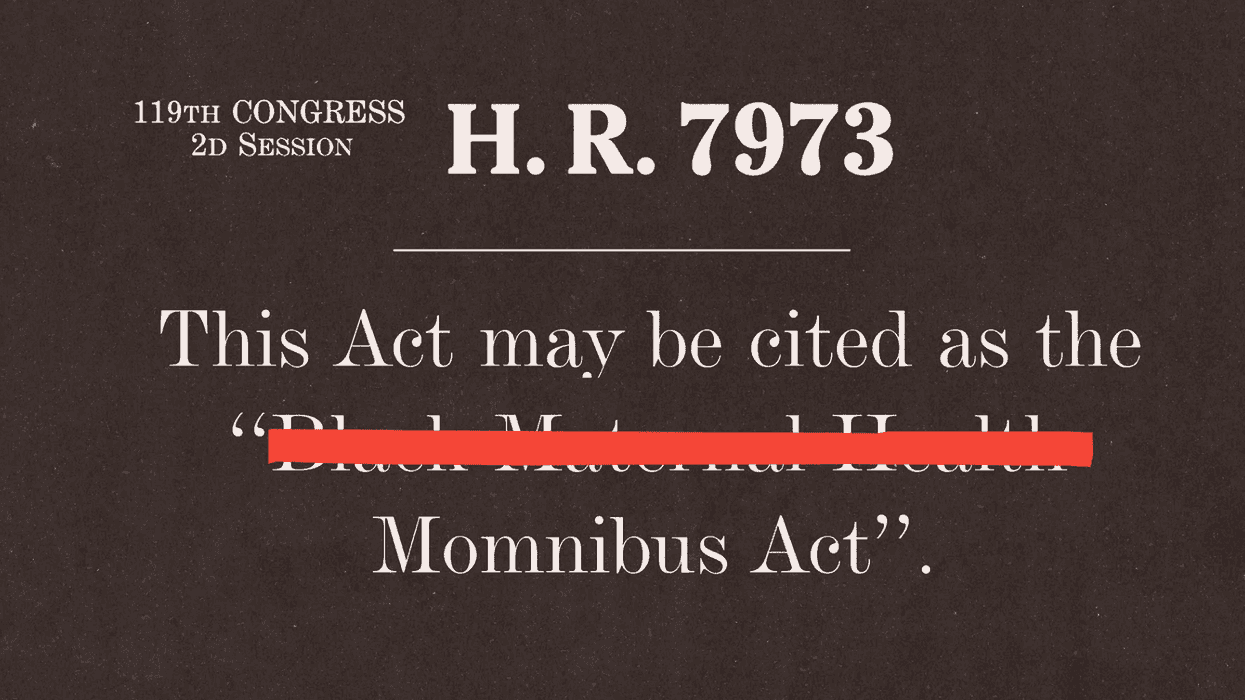

Rep. Lauren Underwood, a lead sponsor of the Momnibus package, said the title change reflects how people commonly refer to the legislation and emphasized that the bill continues to help Black women. (Samuel Corum/Getty Images)

Rep. Lauren Underwood, a lead sponsor of the Momnibus package, said the title change reflects how people commonly refer to the legislation and emphasized that the bill continues to help Black women. (Samuel Corum/Getty Images) At an April congressional hearing, Rep. Summer Lee questioned Health and Human Services Secretary Robert F. Kennedy Jr. about reports that organizations applying for federal dollars had been told to remove words including ‘Black’ from funding applications. (ALLISON BAILEY/NURPHOTO/AP)

At an April congressional hearing, Rep. Summer Lee questioned Health and Human Services Secretary Robert F. Kennedy Jr. about reports that organizations applying for federal dollars had been told to remove words including ‘Black’ from funding applications. (ALLISON BAILEY/NURPHOTO/AP)