Klug served in the House of Representatives from 1991 to 1999. He hosts the political podcast “Lost in the Middle: America’s Political Orphans.”

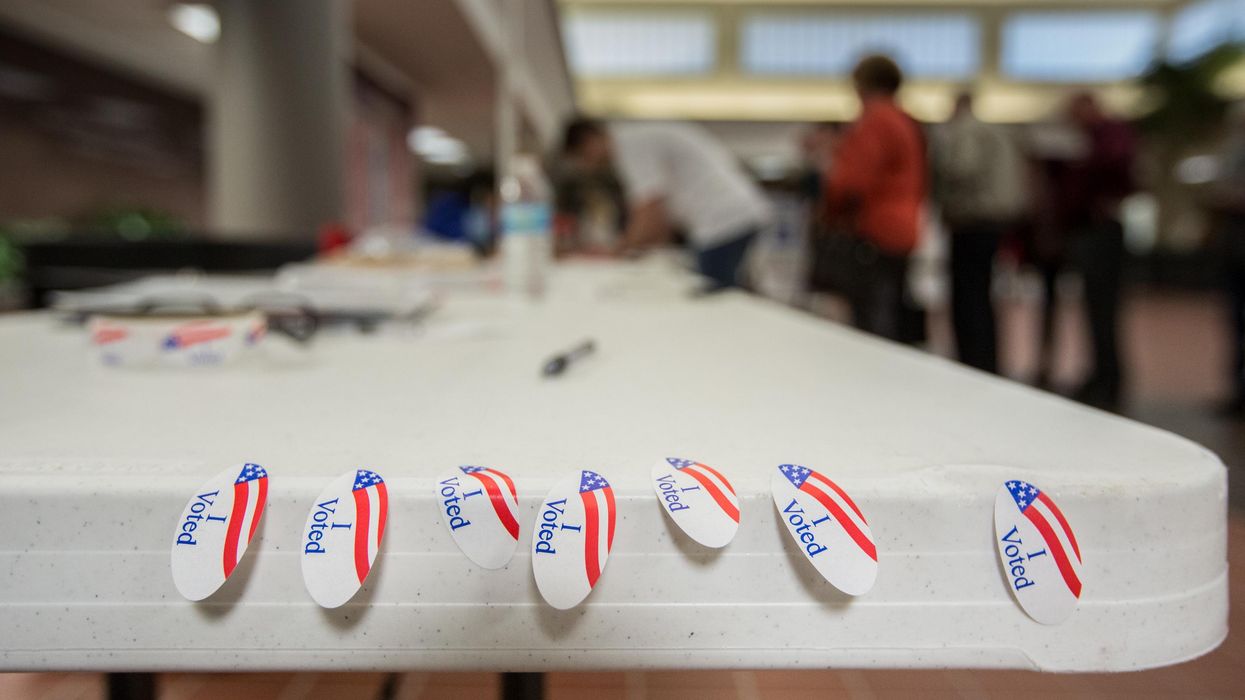

As we head into election season, the potential for misinformation is enormous, and election officials have only a limited ability to respond to artificial intelligence.

The new technology arrives at a time when we still haven’t gotten our arms around social media threats.

“The ability to react at the pace that's being developed is almost impossible,” worries Idaho Secretary of State Phil McGrane, a Republican. “By design, our system is meant to be slow and methodical.”

While deep fakes get all the attention, the larger problem is that the threat arrives at a time when election administration itself is shaky. Election officials have borne the brunt of public mistrust, and in some states more than half of them have quit.

“I have a little PTSD, as do my coworkers,” said Nick Lima, who heads up elections in Cranston, R.I., and who — with some reservations — decided to keep the job he loves. “During election season, you know, you really feel the pressure, you feel your heartbeat increasing a bit.”

Today, everyone who works in the election infrastructure faces unending scrutiny. If you thought it was easier in a red state, you are mistaken.

“Just the act of standing behind you watching you work just puts you on edge,” said McGrane. “Now you start second-guessing yourself, even if you know you're doing it right. The poll workers don't know about cyber security on voting equipment, but your poll watcher is getting asked these questions.”

Deep fakes are one level of concern. Edward Perez, a former director of civic integrity at Twitter who now sits on the board of the OSET Institute (whose mission is to rebuild public confidence in our voting system), worries about the misuse of AI to disrupt the back rooms of every American precinct.

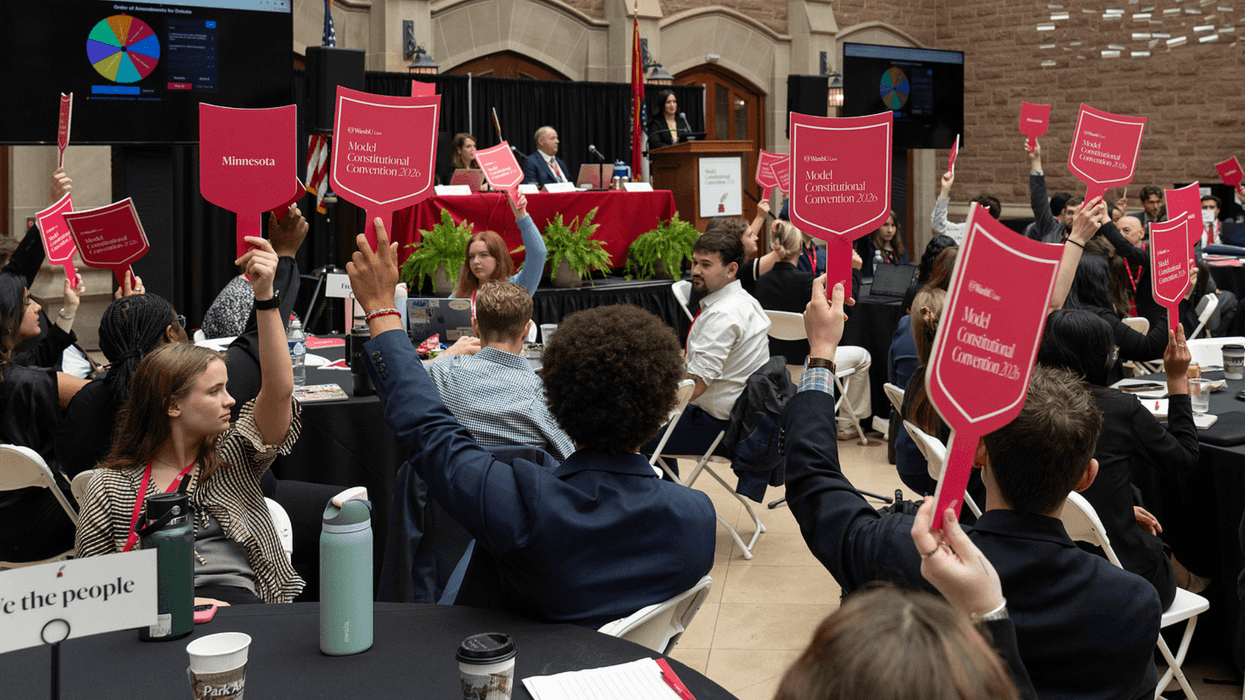

“One of the most important things to understand about election administration is, it’s very, very process oriented. And there’s a tremendous number of layers,” he said. “Are we talking about voter registration? About the security of election administration? All of this technology is never deployed just in a vacuum.”

The election system is a patchwork of rules and regulations across 50 states, each with its own voting laws, and that adds to the complexity. The challenge is serious: in this climate of election denial, officials are scrambling to staff 132,000 polling places with 775,000 volunteers. The clock is ticking to deploy the necessary defenses against threats that aren’t fully understood.

*From hanging chads to deep fake videos, American democracy wobbles*, by Scott Klug
