Minichiello writes The Sift newsletter for educators at the News Literacy Project.

I see misinformation all the time. Scrolling through Instagram, I saw a musician I follow sharing false posts about the Israel-Hamas war. When I was out to eat at a restaurant, a server making friendly small talk told me about true crime content she finds online, rattling off the names of accounts that I later discovered were conspiracy-minded. A friend of mine thinks the conspiracy theory site Infowars is a delight. And there's my relative who started entertaining the idea that the world is flat after watching YouTube videos.

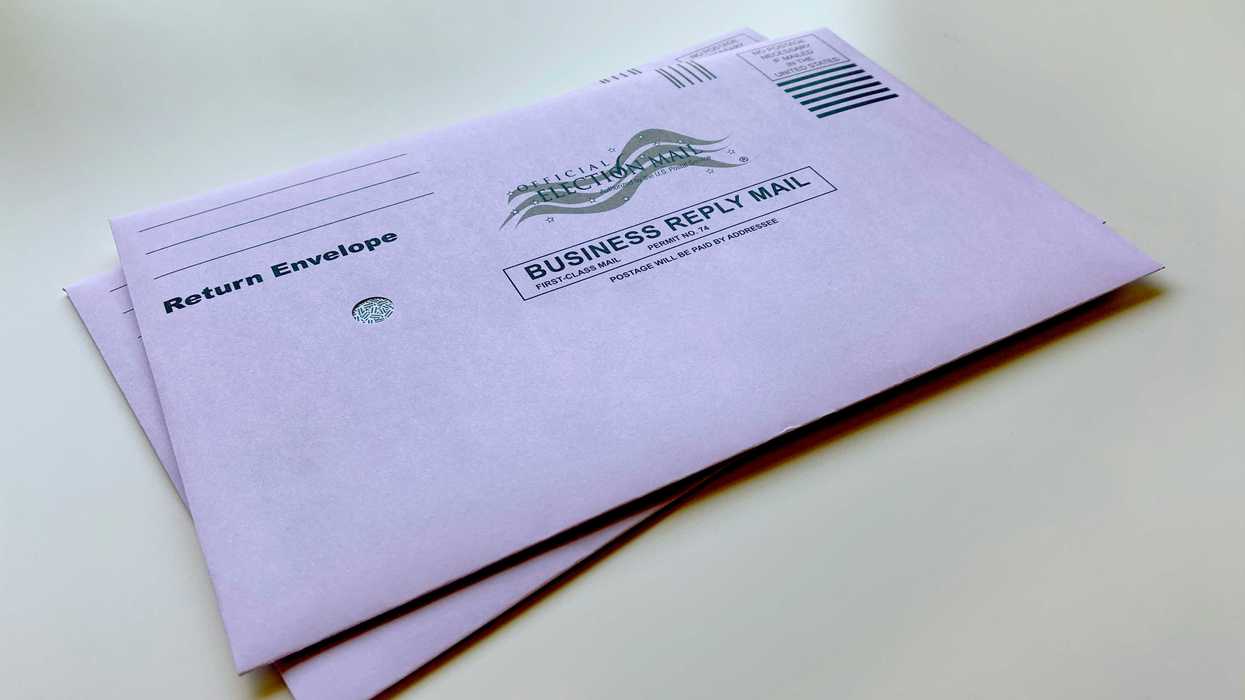

Misinformation affects everything from our health decisions to our personal relationships to business to, of course, democracy. It's easy to get angry when we're confronted with misinformation (that's what it's designed to do), but learning to sort fact from fiction online while also practicing empathy will go a long way toward fixing the misinformation crisis.

And the News Literacy Project, where I now work after a career in journalism, can teach people how to identify credible news.

First, if you're fortunate enough not to live in a local news desert, you should get your information from your local newspaper, radio station or TV news programs. Beware of online "news" sites that are actually partisan propaganda. If a site has few or no local stories but plenty of politically charged articles, that's a red flag. Also, follow news outlets that adhere to journalism standards and ethics, such as being transparent about corrections. Quality local news not only empowers us individually, but studies show it's also good for democracy.

Second, when navigating difficult conversations where misinformation may come up, try practicing what the News Literacy Project calls "PEP": patience, empathy and persistence. You can't convince people of anything in one heated conversation, but if you listen, you can walk away with a better understanding of how they developed their beliefs.

“We don’t all think the same,” said one of my relatives, who refused to get a Covid-19 booster shot after seeing misinformation about how it might affect someone’s hair.

I responded: “I’m not saying we need to think the same thing. I’m saying we need to know what’s true before we agree or disagree.”

It's hard when someone you love is repeating falsehoods, but that doesn't mean they're unintelligent or a bad person. We're living in a system where social media platforms don't properly moderate content for misinformation and, in fact, incentivize its spread through outrage. Laws and regulations haven't caught up with how quickly artificial intelligence technologies, which can produce misleading images, videos and posts, are being developed.

Additionally, remember that none of us is exempt from falling for misinformation. It's embarrassing, but it's happened to me too. During the early days of the pandemic, photos spread online of dolphins swimming in Venice canals. They seemed mesmerizing and magical. Of course, those photos later turned out to be false. I felt like a fool, which is something to remember the next time I spar with a friend over misinformation.

Misinformation is a systemic problem. We can do our part to counter it by seeking out credible sources of news. We can keep in mind that it's easy to be fooled by falsehoods. And we can engage in tough, face-to-face conversations with the people we care about, leading with empathy rather than accusations.

One tactic has helped me immensely, not just in countering misinformation I see online but also when talking through hot-button misinformation topics with loved ones: simply take ... a ... pause. This is actually a news literacy skill. Being news-literate means being able to identify credible information, which is often as simple as pausing to confirm whether something is true before sharing it online or in conversations.

It also means seeking out credible news sources like your local TV station or paper. By practicing news literacy skills, we’ll be better equipped to find trustworthy information and engage in difficult conversations about conspiracy theories and misinformation.

During National News Literacy Week, which runs Jan. 22-26, you can learn how to push back on misinformation and empower yourself and your communities to seek quality, vetted information. Join us in our effort to build a national movement to advance the practice of news literacy throughout American society, creating better informed, more engaged and more empowered individuals — and ultimately a stronger democracy.
