In this episode of the Politics in Question podcast, the team discusses thermostatic politics to explain what it means and how it works.

Podcast: What is thermostatic politics?

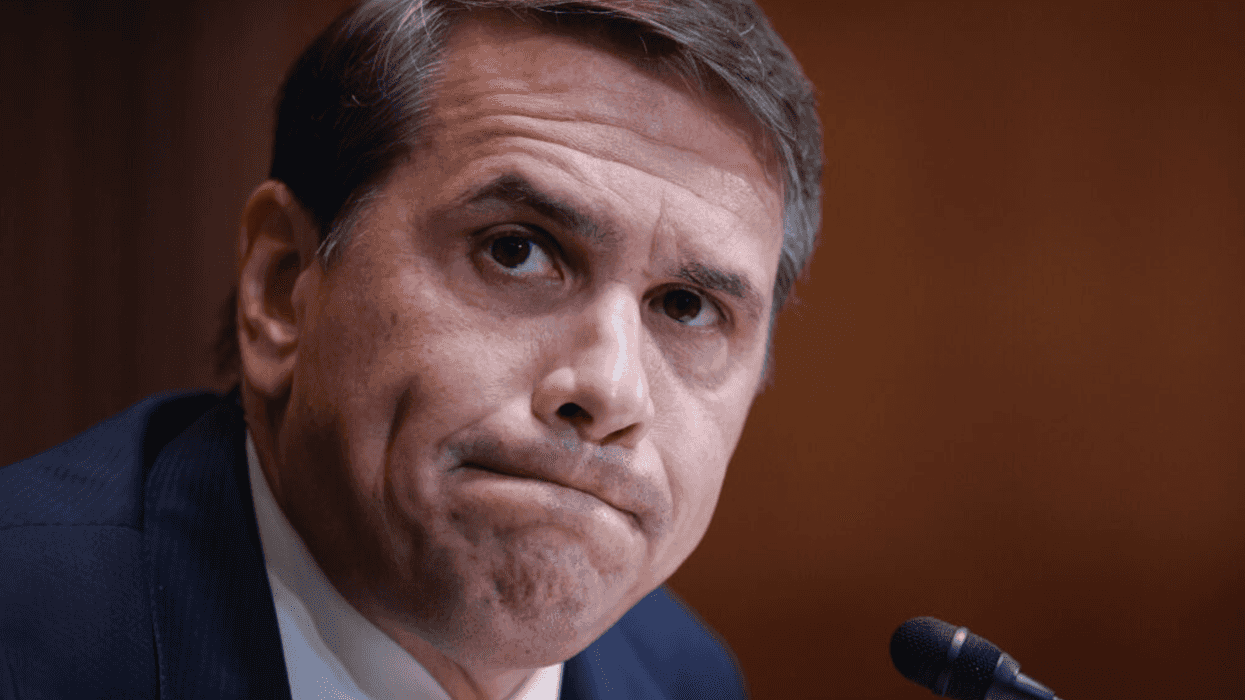

Acting U.S. Attorney General Todd Blanche testifies during a Senate Committee on Appropriations, Subcommittee on Commerce, Justice, Science, and Related Agencies hearing in the Dirksen Senate Office Building on Capitol Hill on May 19, 2026 in Washington, D.C. The hearing was held to examine the Department of Justice's proposed FY2027 budget estimate.

There was a time the Republican Party believed in policies and principles. Conservatives genuinely believed in democracy and America, and not the cynical new version that requires its citizens to hate each other. And they believed in a contest of ideas.

The concept of competing for the soul of the nation with intellectually rigorous ideas and admittedly populist rhetoric became foundational to American politics and in particular movement conservatism later on in that century.

In a speech at CPAC in 1985, President Ronald Reagan boasted of the right’s recent dominance as a result of that competition. “Now, we’re not in power now because [the left] failed to gain electoral support over the past 50 years. They did win support. And the result was chaos, weakness, and drift,” he said. “Ultimately, though…we are where we are because we’re winning the contest of ideas. In fact, in the past decade, all of a sudden, quietly, mysteriously, the Republican Party has become the party of ideas…. All of a sudden, Republicans were not defenders of the status quo but creators of the future.”

And it was this way for some time, when conservatives believed that if given the opportunity, they could win over the country on the power of their ideas.

But in the era of Donald Trump, Republicans have decided competing is no longer useful or necessary, and they’re out of good ideas.

In some ways, they’re not wrong. Any number of Trump’s “ideas,” from the Iran war to tariffs to burying the Epstein files, poll as wildly unpopular, and Republicans would lose that competition if judged solely on their merits.

But rather than even bother persuading, they’ve opted for other less democratic and more cynical pursuits to win elections.

The latest is Trump’s $1.8 billion slush fund, which will award allies and Trump supporters “compensation” for what the Trump DOJ is calling weaponized lawfare against them.

That means, presumably, that Jan. 6 insurrectionists, some of whom committed crimes at the Capitol, including assaulting police officers, could apply for and win taxpayer dollars as a form of political reparation.

One way to view these payoffs is that they’re a blatant bribe to buy MAGA support at a time when Trump is unpopular and making unpopular decisions that are impacting his own voters.

The GOP redistricting orgy is another example of Republicans simply giving up on winning voters over. Trump and his cronies in Congress love to boast about how America is now the “hottest” country, thanks to him, but if that were the case, wouldn’t they just go out and sell that to voters before the midterms? Instead, they’ve spent millions carving out new districts all over the South. That’s hardly a reflection of a party that’s confident in their ideas and their message — rather, it’s a party admitting, we can’t win it unless we rig it.

Trump’s media fights are about more than just his fragile ego, too. They’re another tell. In trying to coerce new Trump-friendly mergers, to get detractors fired from Fox and elsewhere, to sue media outlets for critical coverage, Trump isn’t just telegraphing his deep insecurities, he’s tacitly admitting he can’t win with a free and fair press.

Trump, the consummate salesman, isn’t even bothering to sell voters on his economy or his war anymore. He’s just lying about both, and hoping loyalty to him will be enough. But just in case it isn’t, Republicans are doing every craven thing they can to tip the scales and go around the voters.

It’s sad to watch how far the party of Reagan has fallen, but today’s Republican Party has unequivocally waved the white flag in the contest of ideas, and they’re just hoping we’re all too stupid to notice they’re no longer even asking for our vote.

GOP Waves White Flag in Contest of Ideas was originally published by The Tribune Content Agency and is republished with permission.

U.S. President Donald Trump (L) speaks to White House Chief of Staff Susie Wiles as he oversees "Operation Epic Fury" at Mar-a-Lago on February 28, 2026, in Palm Beach, Florida.

Why has Donald Trump transformed his foreign policy from isolationist to interventionist?

He doesn’t have some newfound curiosity in foreign affairs. Nor does he now deeply care about the global order. He’s shifted his focus for a different reason entirely: because his domestic agenda keeps getting stymied by checks and balances.

Unlike at home, Trump has few restraints on the global stage. He likes that environment much more.

He’s a bull who needs his China shop.

America’s domestic political system is resisting Trump more than many think. Sure, he’s gotten things done and caused lots of trouble. And to the victims of his abuses, the restraints on his power are far too weak.

But Trump’s domestic agenda has been muddled and underwhelming, especially for a president whose party controls Congress.

This is true even when the noise is screeching. Take DOGE, Trump’s partnership with Elon Musk. Remember how it was supposed to dramatically reduce federal spending?

For all its sound and fury, DOGE was largely negated by the very president who authorized it. About Trump's 2025 spending bill, Musk asked: “What’s the point of DOGE if the government’s just going to add $5 trillion more in debt?”

Moreover, while many Republicans slavishly acquiesce to Trump, not all of them do. Republican senators are rejecting his demand to end the filibuster. House Republicans are investigating the Epstein files.

The courts, for their part, rule against Trump regularly, including striking down his signature tariffs policy. His effort to undo birthright citizenship will likely soon meet the same fate.

State and local governments are likewise resisting Trump. Just look at California’s and Virginia’s broad gerrymanders in response to his (and his fellow Republicans’) election machinations.

The media–traditional and independent alike–have been fierce, notwithstanding Trump’s threats (and lawsuits). And the people in the streets, like in Minnesota, have made their voices heard.

Polling data suggests that Democrats will take the House in November. If they do, Trump will likely be impeached (again), and his legislative agenda will be in tatters.

Indeed, Trump has worthy opponents across American society thwarting his domestic ambitions.

Every single day.

But foreign policy, alas, is different. These checks don’t work internationally. A simple comparison illustrates the point: The same president who can’t get his political rival, Adam Schiff, indicted can unleash the might of America’s military on a whim.

Trump can largely do what he wants globally–including blowing up suspicious ships, taking out heads of state, invading sovereign nations, and threatening to end a civilization on social media.

It’s not supposed to work this way. The United States Constitution is ambiguous in numerous respects. But on declaring war, it’s clear: Article 1, Section 8 grants Congress, not the president, the power “To declare War.”

“Only Congress can declare war,” Republican Senator Rand Paul said in March while protesting the Iran invasion. “That’s not my opinion. That's Article 1 of the Constitution … History will not be kind to a Congress that gave away its most solemn responsibility.”

As Trump's supporters emphasize, multiple presidents have initiated hostilities without congressional support. But numerous others have gone to Congress, as George W. Bush did in 2002 before the Iraq war.

One virtue of working with Congress before declaring war is that the public debate is more robust. Today, the American people are left to guess why Trump invaded Iran.

When he did so, without first seeking congressional authorization, he violated Article 1, Section 8 of the Constitution.

It’s that simple. Yet there was no practical way to stop him.

Congress moves far too slowly to block an aggressive commander-in-chief. And the courts can only do so much. Judges can force Trump to refund his tariffs, for example, but they can’t unwind the invasion of another country.

Ultimately, the person in the Oval Office is what matters most. As John Adams put it long ago, “We have no government armed with power capable of contending with human passions unbridled by morality … [which] would break the strongest cords of our Constitution as a whale goes through a net.”

Trump’s global ambitions flow from his domestic frustrations. The way to restrain him should be to follow the Constitution. That is, unfortunately, like trying to catch a whale with a fishing net.

Edward Larson is a Pulitzer Prize-winning legal historian, and William Cooper is an attorney and author. They cohost the podcast How Our Constitution Works And Why It Doesn't.

President Donald Trump at the White House on Oct. 14, 2025, in Washington, D.C.

To understand the current state of the American executive, one must look past the daily headlines and toward a deeper, more structural transformation. We are witnessing a presidency that has moved beyond the traditional "team of rivals" or even the "team of loyalists." Instead, the second Trump administration has become an exercise in "liquid governance," where the formal structures of the state are being hollowed out in favor of a highly personalized, informal power center.

The numbers alone are staggering. So far, the revolving door of the Cabinet has claimed high-profile figures with a frequency that would destabilize a mid-sized corporation, let alone a global superpower. The removal of Attorney General Pam Bondi, the exit of Homeland Security Secretary Kristi Noem, and the recent resignation of Labor Secretary Lori Chavez-DeRemer represent more than just standard political turnover. They signal a fundamental rejection of the idea that a Cabinet secretary is an institution's steward. In this White House, a Cabinet post is a temporary lease, subject to immediate termination if the occupant’s personal loyalty or public performance deviates even slightly from the president’s internal barometer.

The volatility does not end with the pink slip. The recent civil contempt resolution filed by House Oversight Democrats against Bondi for defying subpoenas related to the Epstein investigation is a vivid illustration of the "liquid" model: an official is discarded the moment their utility expires, leaving the individual to navigate the institutional wreckage alone, while the administration simply flows toward the next loyalist.

The most consequential shift is occurring within the national security apparatus. Secretary of War Pete Hegseth has moved with startling speed to reorganize the military hierarchy. By removing the Army’s top officer and the head of the Navy during a period of active friction in the Middle East, Hegseth is executing a mandate to "de-bureaucratize" the Pentagon. But the cost of this purge is the systematic removal of institutional memory. When you replace seasoned commanders with those whose primary qualification is ideological alignment, you make the military more brittle.

This focus on internal purging is particularly alarming given the current geopolitical climate. As the administration continues its high-stakes involvement in the Iran conflict, the lack of stable leadership at the top of the military branches creates a vacuum. In that vacuum, strategy is replaced by impulse.

The irony of the current moment is that as the official Cabinet becomes more volatile, the real power has consolidated in a "Shadow Cabinet" of unconfirmed advisors. Jared Kushner and Steve Witkoff now operate as the primary envoys for America’s most sensitive diplomatic portfolios. From negotiating nuclear red lines with Tehran to managing the complex endgame in Ukraine, these two individuals—neither of whom holds a Senate-confirmed position—are the true architects of U.S. foreign policy.

This arrangement creates a dangerous disconnect. While the official Secretary of State or Secretary of War handles the administrative affairs of their departments, the real deals are made in private by men whose primary bond to the president is personal or commercial. This is a return to a pre-modern form of governance, one in which familial ties and personal trust outweigh professional expertise and public accountability.

The standard critique is that the president simply selects incompetent people. But this misses the point. The individuals being removed—like Bondi or Noem—were not outsiders; they were loyalists. Their failure to survive suggests that the problem is not a lack of competence, but a lack of clarity in what the job actually entails. If the job of a Cabinet secretary is to act as a decorative placeholder for a policy that is actually being run out of a private suite at Mar-a-Lago, then independent judgment, by definition, is seen as a form of resistance.

The result is a talent drain. The "best people" the president frequently cites are increasingly unwilling to serve in an environment where the professional risks are high and the actual authority is low. This leaves the administration with a narrowing circle of candidates: the true believers, the opportunists, and the relatives.

The world is watching this administrative volatility with growing unease. For decades, the stability of the American executive was the "anchor tenant" of global order. Allies and adversaries alike could rely on a certain degree of continuity in the State Department or the Pentagon. That continuity is now gone.

When a government is in a state of permanent reshuffling, it loses the ability to project long-term intent. Foreign capitals are no longer calling the State Department to understand American policy; they are trying to figure out who is currently "in" or "out" of the inner circle. This unpredictability might serve a real estate developer in a tactical negotiation, but it is a disastrous way to run a global superpower.

The tragedy of the second term is not that the president is changing his team; it is that he is effectively dismantling the idea of a "team" altogether. We are left with a government of one, assisted by an informal circle of associates, presiding over a bureaucracy that is increasingly paralyzed by its own instability. In the long run, the greatest threat to American power may not be a rising China or a belligerent Iran, but the steady erosion of the very institutions that were built to project and protect that power.

Imran Khalid is a physician, geostrategic analyst, and freelance writer.

King Charles III and U.S. President Donald Trump attend a state arrival ceremony on the South Lawn of the White House on April 28, 2026 in Washington, DC.

Last month, the King of England came to Congress and schooled us on what it means to be American. This would be hysterical if it wasn't so tragic.

To understand why, you need to understand two things happening inside our government right now.

The first is the unitary executive theory -- the idea that the president has sole, total control over every agency, every employee, every decision in the executive branch. Not leadership. Control. For most of our history this was fringe. Congress created independent agencies -- the Federal Reserve, the FDA, the National Weather Service -- precisely so expert, nonpartisan work could be insulated from whoever happened to be in office. Madison called the concentration of all powers in one set of hands "the very definition of tyranny."

But in February 2025, Trump signed an executive order declaring all federal agencies "must be supervised and controlled" by the president. Project 2025 laid the blueprint. Then came the purge: FTC commissioners fired, a Federal Reserve governor targeted, USAID dissolved, inspectors general removed, thousands of civil servants stripped of protections.

What does this look like in your life? The National Weather Service lost roughly 600 people. Then on July 4, the Guadalupe River rose 26 feet in 45 minutes and more than 130 people died across central Texas, including 27 campers and counselors at Camp Mystic. The administration's 2026 budget proposes eliminating the NOAA lab that developed key flash flood prediction tools. At the NIH, about 2,300 grants totaling $3.8 billion were terminated, affecting at least 383 clinical trials. The FDA lost nearly 4,000 employees. Foreign food inspections hit historic lows.

The second thing is a strain of nationalism, championed by Israeli political theorist Yoram Hazony, that argues a nation isn't built on ideas like "all men are created equal" but on tribal bonds -- shared blood, language, religion, ancestry. Hazony's conferences feature regular speakers like JD Vance, Marco Rubio and Sen. Josh Hawley. This philosophy has entered the White House.

You can hear it when Trump calls immigrants people "poisoning the blood of our country." You can see it in ICE's transformation: at-large arrests up 600%, nearly 70,000 people in detention, two U.S. citizens shot dead by federal agents.

I know many of us have been told -- by the administration, by the news, by people we trust -- that immigrants are driving crime. I understand why that's frightening. But the data doesn't support it. Immigrants, including undocumented immigrants, commit crimes at lower rates than native-born citizens. The administration's own records confirm that the majority of people arrested in these operations have no criminal record. The fear serves a purpose: throughout history, when leaders need the public to accept an extraordinary expansion of power, they first have to make people afraid enough to let them.

Which brings me to this week. Trump welcomed King Charles to the White House and spoke of settlers who "bore in their souls the blood and noble spirit of the British," of founders whose "veins ran with Anglo-Saxon courage." He rejected the idea that America is "merely an idea."

King Charles told a different story. He called Congress "this citadel of democracy created to represent the voice of all American people." He said the founders "drew strength in diversity." He cited the Magna Carta -- the charter that established no one, not even a king, is above the law. He urged America to "ignore the clarion calls to become ever more inward-looking."

He was not being polite. He was sounding an alarm.

Then, apparently without irony, the White House posted a photo of the two men with the caption: "TWO KINGS."

This country was founded because we didn't want kings. The unitary executive seizes the power. The nationalism decides who it's used against. A real king came here and reminded Congress what makes nations strong. Our president stood in the same building and spoke of bloodlines and genetic inheritance.

I know which vision I recognize as American.

Sara Sharpe LaMance of Chattanooga is a writer, communication strategist and the founder of The Letters Project and STILL/WILD.

Some MAGA loyalists have turned on Trump. Why the rest haven’t