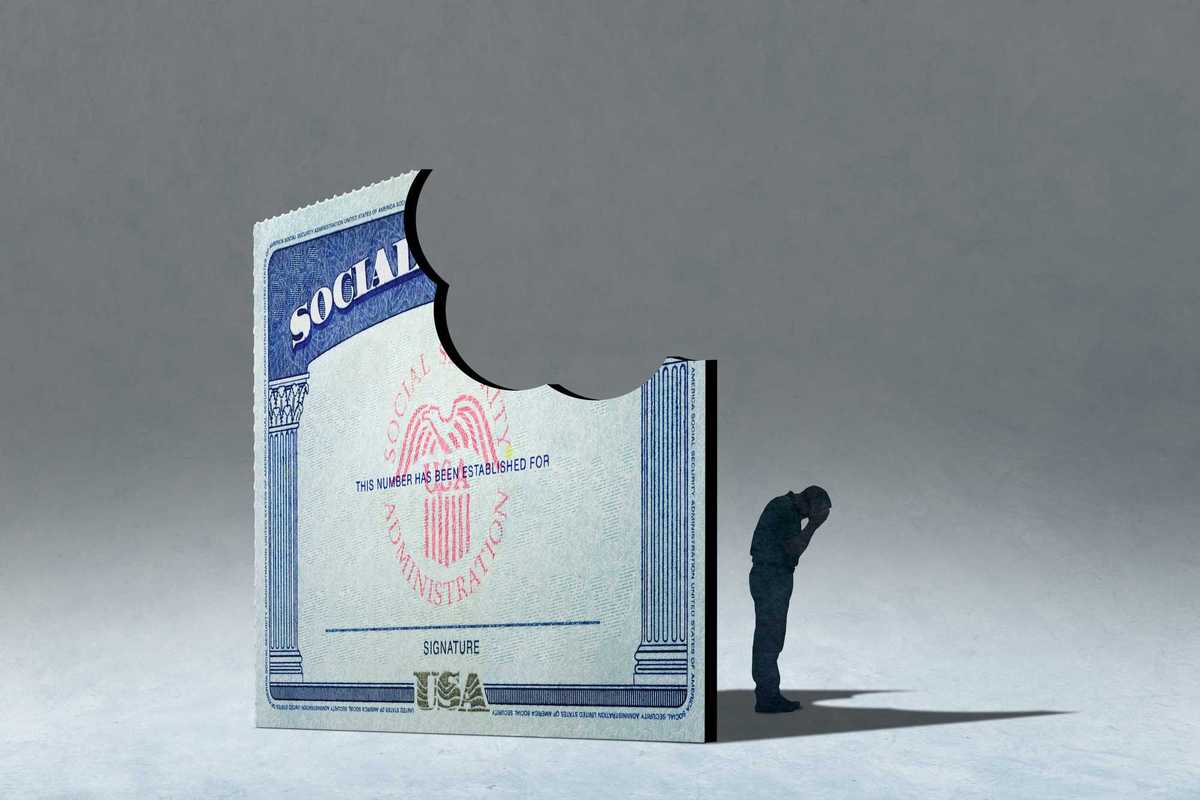

In 2018, economist Raj Chetty and his colleagues revealed a sobering truth: talent is everywhere, but opportunity is not. Their research on "Lost Einsteins" demonstrated that countless young Americans with the potential to be great inventors never get the chance to develop their skills simply because they lack exposure to innovation and mentorship. The data was clear: if a child grows up in an area with a high concentration of inventors, they are far more likely to become one themselves. But for too many, particularly those in rural and lower-income communities, the door to innovation remains closed. Failure to find those "Lost Einsteins" has deprived us all of a better future. Chetty forecasted that "if women, minorities, and children from low-income families were to invent at the same rate as white men from high-income (top 20%) families, the rate of innovation in America would quadruple." That’s a more prosperous, dynamic America.

The introduction of artificial intelligence (AI) carries the promise of realizing that brighter future if we learn from our prior mistakes. A lack of broad exposure among our youth to AI and the individuals shaping its development threatens to leave behind an entire generation of would-be entrepreneurs, scholars, and thought leaders. We risk creating "Lost Sams"—referring to OpenAI's Sam Altman as a stand-in for AI innovators—and "Missing Fei-Feis"—a nod to Stanford AI researcher Fei-Fei Li. Without urgent action, we will reinforce the existing gaps in AI leadership, limiting who gets to shape the future of this transformative technology.

To bridge this divide, we need a grassroots solution: AI Guides. These guides should be trusted figures within their communities—teachers, local entrepreneurs, librarians, and civic leaders—who are trained to introduce AI concepts in an accessible and engaging way. They must not only be knowledgeable about AI but also be representative of the communities they serve. Chetty’s research indicated that exposure to innovation had a larger impact on students when it involved a young person learning from someone who shared their background. If AI remains a field dominated by those from elite institutions and urban tech hubs, we will continue to miss out on the perspectives and innovations that could emerge from diverse backgrounds.

AI Guides can serve as the crucial link between communities and the AI revolution. They can run after-school programs, host workshops in community centers, and partner with local businesses to demonstrate real-world AI applications. By working with startups, big tech companies, and public universities, these programs can provide hands-on exposure to AI tools and career paths that young people might never have considered.

Why Local Action is Critical

Waiting for federal action on this front is a losing bet. While Washington debates AI regulations and corporate leaders focus on AI’s role in competition and security, communities must take the initiative. The disparities in AI adoption are stark. According to a study from the MIT Sloan School of Management, AI adoption is concentrated in high-income, urban areas, leaving rural and economically disadvantaged communities far behind. Similarly, research from Deloitte shows that trust plays a significant role in AI adoption, particularly among women. Building that trust in new technology requires learning opportunities in comfortable, familiar settings, not just top-down initiatives.

Consider the success of community-driven AI initiatives abroad. Estonia, for example, has launched an ambitious program to integrate AI education into high schools, ensuring students graduate with foundational AI literacy. The United States, in contrast, has no national strategy for ensuring AI exposure for young people beyond select STEM programs that often fail to reach the most underserved populations.

But this is not just an education issue—it’s an economic one. Studies from Harvard Business School show that women are already avoiding AI tools in the workplace, which could have long-term career consequences. Meanwhile, research from the National CIO Review highlights that younger generations are more likely to adopt AI, but that doesn’t mean they are receiving structured guidance on how to use it effectively. If we don’t intervene now, we risk deepening existing inequities in AI participation and leadership.

What AI Guide Programs Could Look Like

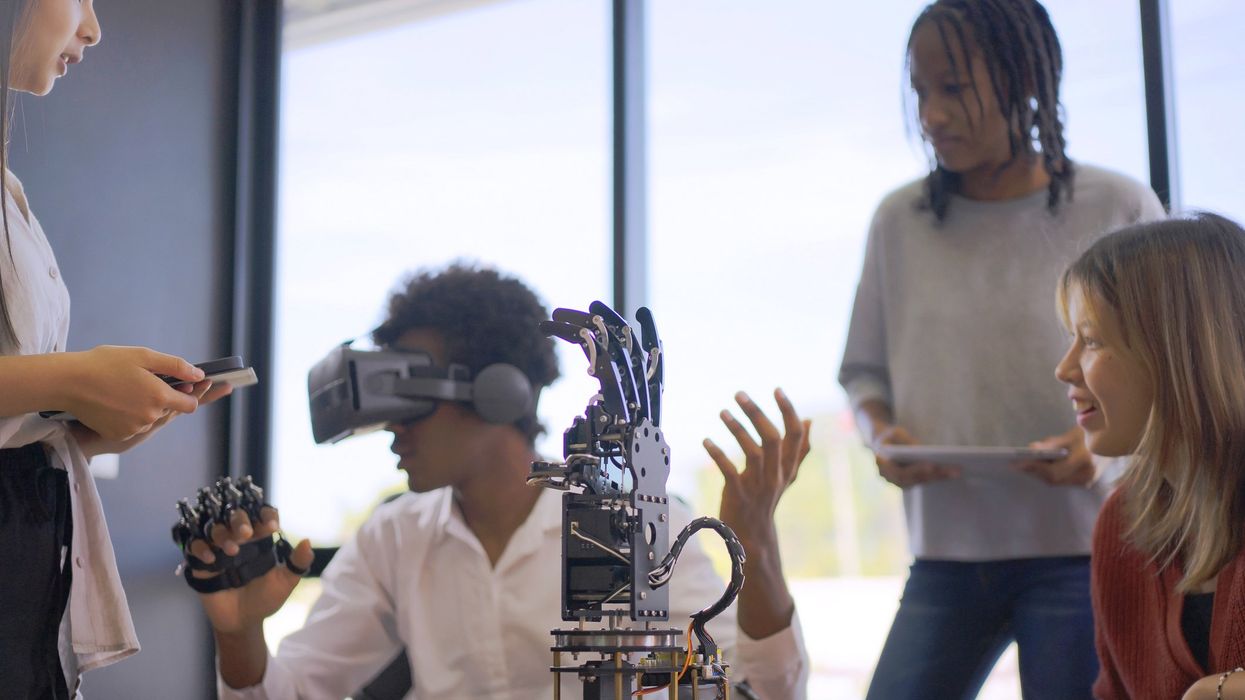

The AI Guide model can be easily adapted to different community needs. A few possibilities include:

- Elementary School AI Clubs: Volunteers from local startups or university AI programs could run interactive sessions where kids learn about AI through games and simple coding exercises.

- Community Center AI Nights: Public libraries or town halls could host monthly AI workshops, focusing on topics like how AI affects job markets, healthcare, and daily life.

- Mentorship Pairing with AI Professionals: High school students interested in AI could be paired with mentors who work in AI-related fields, whether in big tech, academia, or startups.

- AI for Small Businesses: Local entrepreneurs could be trained on how AI tools can help them streamline operations, from customer service chatbots to marketing automation.

These programs don’t require massive federal investment. They require committed local leaders, support from tech companies willing to provide training materials, and partnerships with public universities.

A Call to Action

We have the talent. What we need is exposure. AI Guides could be the key to ensuring that the next generation of AI innovators comes not solely from Silicon Valley but from every corner of the country. Every town has the potential to produce its own AI pioneers, but only if we provide young people with the mentorship and opportunities they need.

If we fail to act, we will look back in twenty years and see yet another generation of “Lost Sams” and “Missing Fei-Feis.” But if we rise to the challenge, we can ensure that AI’s future is shaped by a truly diverse and representative group of thinkers, creators, and leaders. It’s time to build the bridges that will connect every young mind to the possibilities AI has to offer.