Let's be honest: does driving feel safe anymore? Ask anyone navigating the daily commute, especially in notoriously chaotic places like Miami, and you'll likely hear a frustrated, perhaps even expletive-laden, "No!" That gut feeling isn't paranoia; it's backed by grim statistics. In Travis County, Texas, alone, over 200 people died on the roads in 2023, according to Vision Zero ATX. Nationally, tens of thousands die in preventable crashes every year. It's a relentless public health crisis we've somehow numbed ourselves to, with a staggering cost measured in shattered families and lost potential.

But imagine a different reality, one where that daily fear evaporates. What if I told you that the technology to dramatically reduce this carnage isn't science fiction but is already sitting right under our noses? Autonomous vehicles (AVs), or self-driving cars, are here and rapidly improving. Leveraging breakthroughs in AI, these vehicles increasingly outperform human drivers and are proving significantly less likely to cause accidents, especially those resulting in injury. Studies suggest that replacing human drivers with AVs could drastically cut road fatalities. Even reaching just 10% AV penetration on our roads might improve traffic safety by as much as 50%, and those gains are likely to grow exponentially as the technology becomes more sophisticated and widespread.

The benefits extend far beyond preventing crashes. Many AVs are electric or designed for fuel efficiency, promising cleaner air. They can reduce frustrating traffic congestion by communicating with one another and coordinating their movements. Perhaps most profoundly, AVs offer the potential for unprecedented mobility and freedom for millions: the elderly who can no longer drive safely, people with disabilities who face transportation barriers, and everyone else who could reclaim the hours lost to stressful commutes.

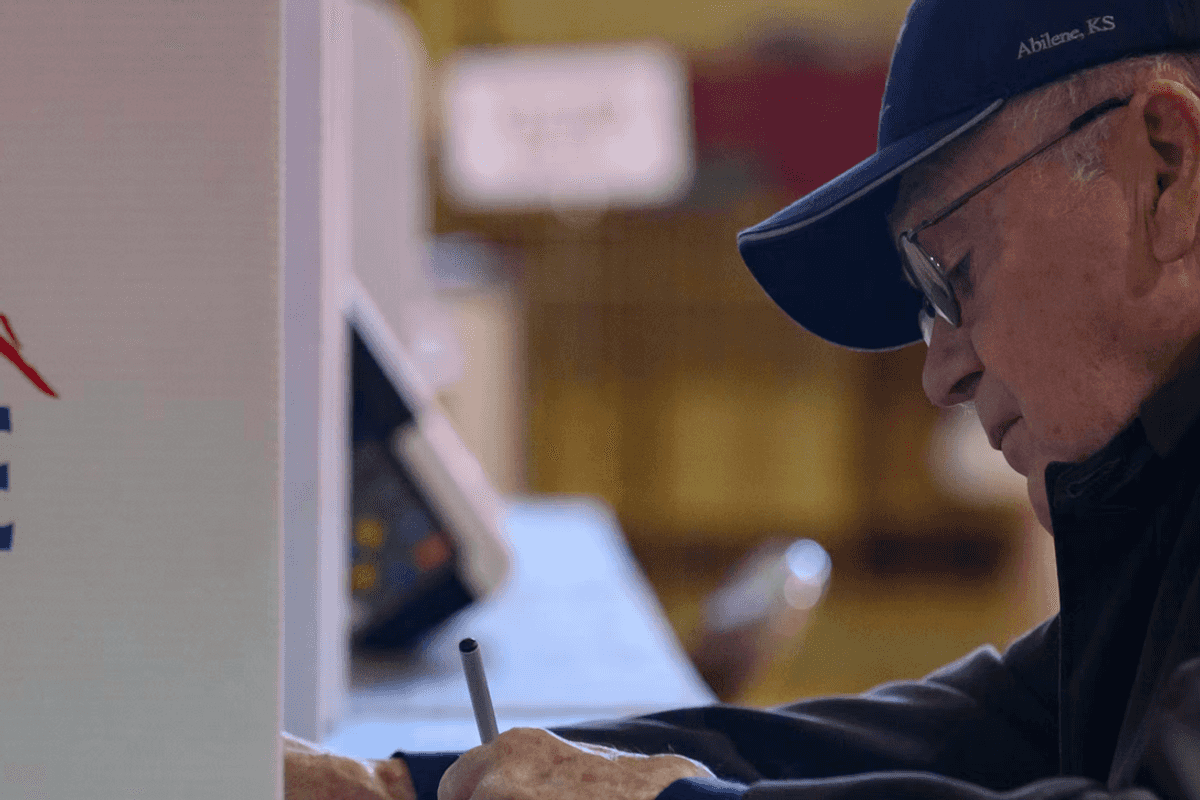

Given this potential, you'd think we'd be rolling out the red carpet for AVs. Private companies are certainly betting big, pouring billions into research and development. Fleets of robotaxis are already operating, albeit cautiously, in cities across the country, such as Austin, Miami, and San Francisco. Yet the transformative leap, widespread adoption that truly moves the needle on national safety statistics, remains frustratingly out of reach. Why the delay?

Ironically, the biggest roadblocks are no longer primarily technological. They are political, regulatory, and societal. We currently face a chaotic patchwork of differing state and local AV regulations, a regulatory traffic jam that makes large-scale deployment a nightmare. How can a car designed to cross state lines operate effectively if the definition of "driver" or the rules of operation change every few hundred miles? This regulatory uncertainty chills investment and confines companies to limited, geographically constrained testing, which slows the learning that is essential to improving AV safety and reliability across all driving conditions. Add to this a healthy dose of public skepticism, often fueled by unfamiliarity and by fears amplified by media coverage that dwells on glitches rather than on the millions of miles driven safely.

This is precisely where government leadership becomes critical. And I argue it's not just a good idea; it's a constitutional obligation. The federal government has a fundamental duty, rooted in the Constitution itself, to actively promote technologies that significantly advance public safety and well-being.

This duty isn't theoretical; it's embedded in the very DNA of our nation. The Constitution's Preamble explicitly lists among its core purposes to "promote the general Welfare." This wasn't just hopeful rhetoric. The Founders drafted the Constitution because the previous government under the Articles of Confederation had proved demonstrably ineffective: unable to provide for national defense, secure economic stability, or even maintain internal order. They intentionally created a stronger federal government capable of tackling big, collective problems for the common good. This implies what some scholars call a "right to effective government": a right to expect our government to use its powers competently to protect us and improve our lives, especially when individual or market actions fall short.

Protecting citizens from widespread, preventable harm like mass traffic fatalities falls squarely within this duty. We've seen the government fulfill this role before. Remember the fight over seat belts? After first appearing in the 1930s, seat belts faced decades of resistance, both from manufacturers who argued that "safety didn't sell" and from a public that pushed back against mandates. It took years of advocacy and, eventually, government action (federal standards that pushed states to enact laws) to make seat belts ubiquitous. The delay undoubtedly cost countless lives. Since 1975, seat belts have saved nearly 375,000 lives.

Federal inaction on AVs today risks repeating that tragic history, sacrificing safety on the altar of regulatory timidity. The National Highway Traffic Safety Administration (NHTSA) has explicit authority to set Federal Motor Vehicle Safety Standards (FMVSS) that protect the public from "unreasonable risk." It's time the agency used that authority to create clear, uniform, national standards for AVs, providing the roadmap the automotive industry needs.

Now, let's acknowledge the real concerns surrounding AVs. Data privacy is paramount: cars packed with sensors could collect vast amounts of personal information, and we need robust regulations to ensure this data isn't misused or exploited. The transition will inevitably affect jobs, particularly in trucking and transportation, requiring proactive policies for worker retraining and support. Equitable deployment is vital so that AV benefits reach rural communities and lower-income individuals, not just affluent city dwellers. Environmental impacts also need careful management to ensure that AVs deliver a net reduction in emissions. These are serious challenges requiring thoughtful, proactive policy responses developed *alongside* AV deployment, not erected as barriers to it. They do not, however, outweigh the moral imperative to prevent tens of thousands of deaths each year.

We face a clear choice. We can continue down the current path, accepting the horrific and largely preventable toll of human driving error while allowing fragmented regulations and unfounded fears to stall progress indefinitely. Or we can embrace the promise of AV technology and demand that our government fulfill its most basic constitutional obligation: to act effectively to safeguard our lives and promote the general welfare. This requires decisive federal leadership now: setting clear national standards, facilitating safe and widespread testing to build public trust, and creating policies that manage the transition responsibly. The technology to save these lives is within reach. It's time our policies caught up.

This is a summary of "A Constitutional Mandate to Adopt AVs," originally published in the Washington and Lee Law Review Online.

Kevin Frazier is an AI Innovation and Law Fellow at Texas Law and author of the Appleseed AI Substack.