News Ambassadors is working to narrow the partisan divide through a collaborative journalism project that helps American communities with different political views better understand each other, while giving student reporters valuable experience producing solutions reporting.

A program of the Bridge Alliance Education Fund, News Ambassadors is directed by Shia Levitt, a longtime public radio journalist who has reported for NPR, Marketplace and other outlets. Levitt has also taught radio reporting and audio storytelling at Brooklyn College in New York and at Mills College in Oakland, Calif., as well as for WNYC’s Radio Rookies program and other organizations.

TODAY’S FEATURED STUDENT REPORT

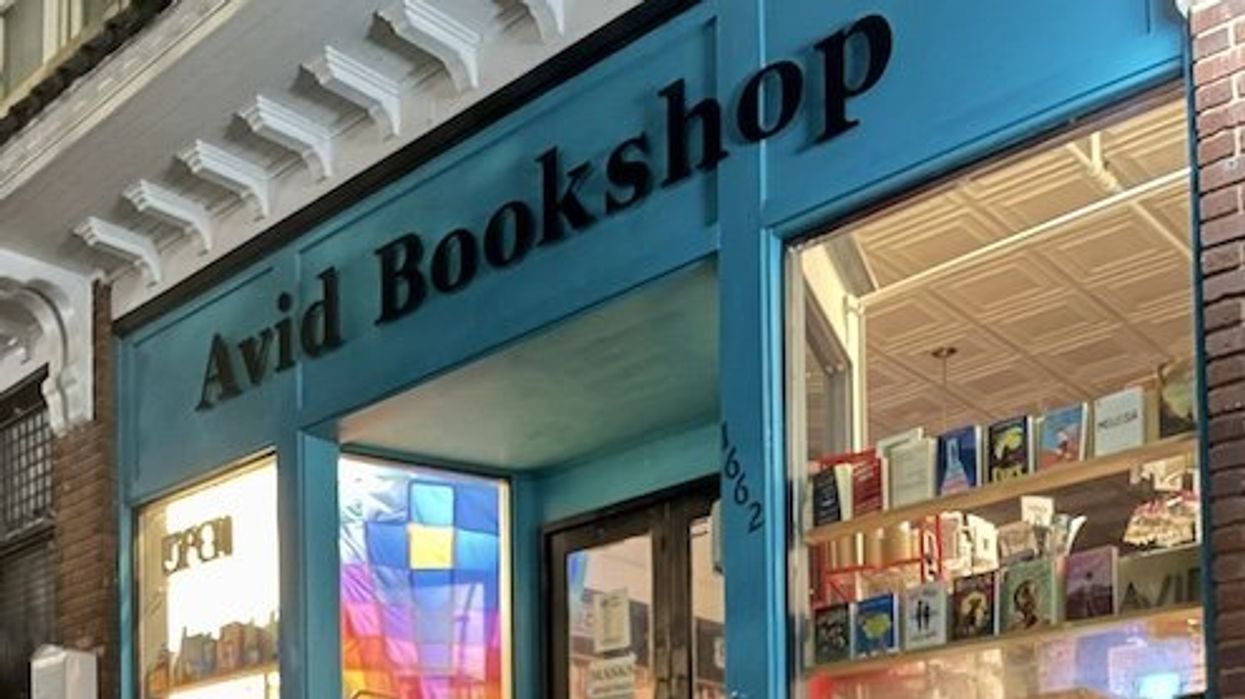

Radio station partner WUGA recently worked with students at the Grady College of Journalism and Mass Communication and the Solutions Journalism Hub of the South to cover efforts to remove books from the shelves of libraries and schools. Such efforts are advancing around the country, and the state of Georgia is no exception. Chloe Beaver, a Grady College senior majoring in journalism and business administration, covered the community of Athens, Ga., as part of Charlotte Varnum’s podcasting class.

In her radio piece, she explores how one Athens bookstore is responding to what critics call book censorship.

Enjoy Beaver’s audio report.

******************************************

HOW NEWS AMBASSADORS WORKS:

News Ambassadors enlists journalism students to help local newsrooms fill gaps in coverage of underreported communities by fostering collaborations between journalism schools and local radio stations to create community-responsive reporting that reflects local concerns and priorities. In the spring, News Ambassadors introduced students to two key reporting strategies:

Solutions journalism: rigorous, evidence-based reporting on how people have responded to existing problems.

Complicating the narratives: a conflict-mediation–informed framework designed to help journalists improve their reporting on polarizing issues.

Student participants report stories informed by one or both of these approaches as well as by local community priorities surfaced during preparatory community engagement work. The best stories are shared with local newsrooms for vetting and potential broadcast.

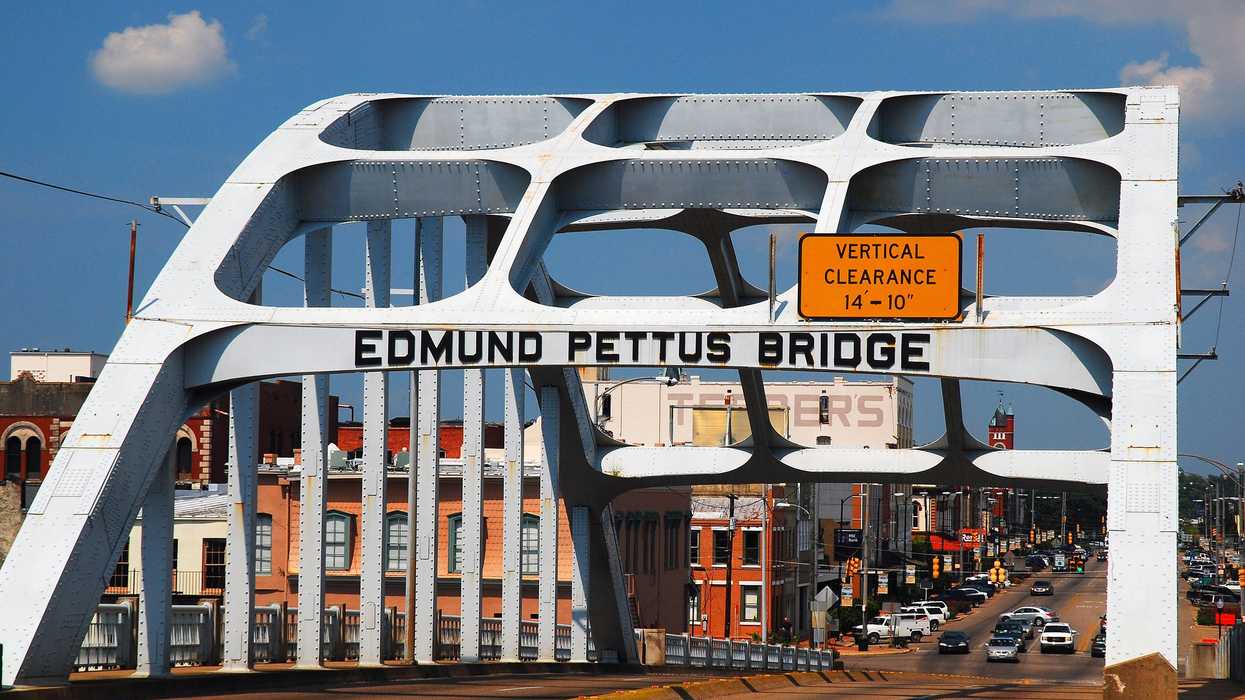

This collaborative project helps young reporters better understand the perspectives of people outside the bubbles where they live and helps American communities that hold different political views better understand each other. Throughout the process, the project links journalism students to counterparts in politically or demographically dissimilar areas to collaborate on stories exploring solutions to contentious issues.