At 15, Owen Cooper made history by becoming the youngest male to win an Emmy Award. In the Netflix series Adolescence, Owen plays a 13-year-old schoolboy who is arrested for the murder of a girl from his school. As the story unpacks what led to the crime, the award-winning series forces us to confront legitimate insecurities that many teenage boys face, from a lack of physical prowess to emotional disconnection from their fathers. It also exposes how easily young men seeking comfort online can be pulled into spaces that normalize misogyny and rage: a pipeline enabled by a failure of tech policy.

At the center of this danger lies the manosphere: a global network of influencers whose words can radicalize young men and channel their frustrations into violence. But this is more than a social crisis affecting some young men. It is a growing threat to the democratic values of equality and tolerance that keep us all safe.

Outside of fiction, we have already seen where this can lead. Recently, French authorities charged a teenager connected to the incel subculture with terrorism, marking the country’s first terrorism case centered on gender-based violence, built on the young man’s alleged plot to harm women. This should not be seen as an isolated incident but as a symptom of a broader ideological problem.

While toxic for the young men it ensnares, the manosphere's deeper danger lies in its corrosion of the foundational values of democracy. These male-dominated online spaces reinforce rigid, traditional masculine norms. On the surface, we may see young men searching for identity and belonging, but beneath churns a murky undercurrent of pseudoscience and contempt for gender equality.

Misogynistic messages poison the minds of young men, weaponizing their frustrations. They offer a seductively simple answer to complex feelings of loneliness: blame women. In 2014, Elliot Rodger fatally stabbed three people before going on a shooting spree to kill three more and injure 14 others. In his manifesto, he wrote, “All of my suffering in this world has been at the hands of humanity, particularly women,” blaming even his childhood crush for his violent hatred of women.

This blame can then harden into hatred of the very institutions that are pillars of a functioning civil society, such as education, government, and the media, breeding a generation that rejects the pluralistic ideals democracy needs to thrive. Take, for example, the rise of far-right and neo-Nazi groups in Sweden that are attracting a new generation of young men who have lost faith in democracy. They share racist memes and violent videos on mainstream platforms like TikTok to attract potential recruits, including boys as young as 10, before moving them to more private, less regulated spaces.

The manosphere is a pathway along which online hate turns into real-world violence. The French case is a direct example, but the trail of blood stretches back to attacks in Toronto, Isla Vista, and beyond. These digital communities don’t just vent; they strategize. They provide twisted justification and promote a culture of martyrdom that glorifies retribution. The leap from dehumanizing rhetoric on a forum to physical acts of terror is shorter than we imagine.

The manosphere is not neutral; it can serve as a pipeline to extremist ideologies. While its entry point is often resentment of women, its logic inevitably expands: the same frameworks of hatred used against women are easily applied to minorities, migrants, and LGBTQ+ individuals. The ADL’s Center on Extremism explains that the fear that women’s equality undermines men’s status is just a step away from seeing all demands for equality as threats to white male dominance. This exposes the symbiosis between misogyny and white supremacy, two ideologies bound together by a deep-seated loathing of women.

And this matters because the status of women has long been a litmus test for the health of democracy. A recent study by the Georgetown Institute for Women, Peace, and Security found that women’s equality is strongly correlated with election integrity, freedom of association and assembly, and checks on executive power. In other words, when women’s rights erode, so too do the foundations of democratic governance.

Some argue that we might be overestimating the influence of the manosphere, as many of its users selectively choose what resonates with them and disregard what doesn’t. Indeed, not every frustrated young man online is a criminal. Not all criticism of society is extremist. Yet history shows that even small, seemingly fringe groups can eventually reshape society in harmful, authoritarian, or destructive ways. When Adolf Hitler joined the German Workers’ Party, which later became the Nazi Party, he was the 55th member. We do not have to wait for a movement to gain a large following to see where it could lead.

Ignoring the manosphere is a luxury we cannot afford. Combating it requires a proactive, multi-sectoral effort that must include a robust tech policy framework. Tech companies must move beyond reactive content moderation and proactively redesign their algorithms to de-amplify hateful content rather than recommend it. They must consistently enforce their own terms of service against organized hate and misogyny, subjecting their enforcement to independent audits for transparency. Furthermore, they should invest in redirect initiatives that algorithmically offer mental health resources and positive mentorship to users searching for harmful keywords.

Governments must move beyond a hands-off approach. They should legislate mandatory safety-by-design standards for platforms, compelling them to conduct and publish risk assessments on how their services might facilitate radicalization. Legislators must also strengthen laws that hold platforms accountable for knowingly profiting from the algorithmic amplification of extremist content.

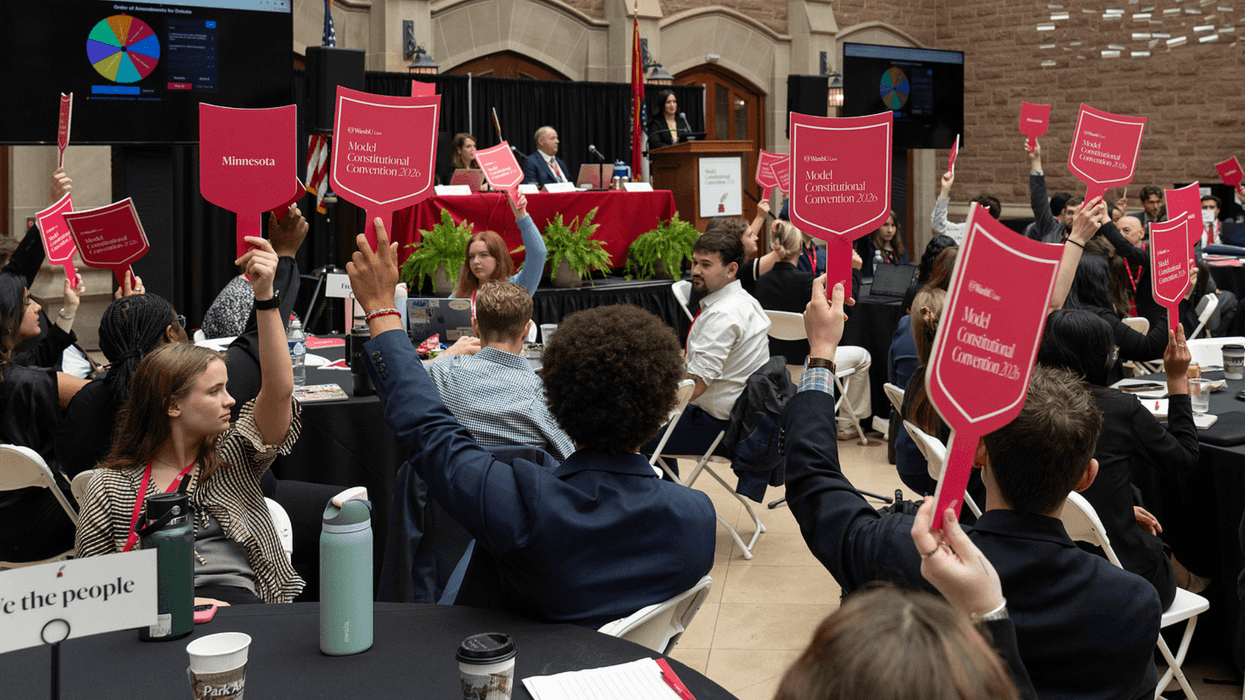

This must be coupled with integrating digital literacy into education to build resilience against manipulation and, most importantly, offering young men positive models of manhood built on respect rather than hatred and blame. Our collective safety and the future of our democratic values may just depend on it.

Kevin Liverpool is a Public Voices Fellow on Prevention of Child Sexual Abuse with The OpEd Project. He works as a partnerships specialist with No Means No Worldwide, an international nonprofit on a mission to end sexual violence against women and children globally.