The first time I watched the video of George Floyd’s final moments as he gasped for air, recorded on a smartphone for the world to witness, it was May 2020, and it was gut-wrenching to see a man’s life end in such a horrific way with just a click.

That single video, captured by a bystander, spread across more than 1.3 billion screens and sent a shockwave throughout the country. It forced people to confront the brutality of racial injustice in a way that could not be ignored, filtered, or explained away.

That moment revealed the dual power of smartphones: they can expose hidden violence and spark collective action, but they also place trauma directly into the hands of those who do not have the tools to navigate it, specifically children. In California, lawmakers recently passed AB 56, which requires social media platforms to display warning language about usage.

The recent video of an ICE agent slamming a mother to the ground in New York’s federal immigration court in front of her children after her husband was taken away is another potent example of such trauma.

This raises an urgent question: are we allowing technology to erode the mental health of innocent children by exposing them to violence without censorship or support?

Over the years, the brutality streamed across devices has only grown more frequent and more graphic. Phones made us witnesses, but without space to process the horror, many scrolled on, carrying unresolved shock from the violence they had just viewed.

An analysis of hundreds of studies by the American Academy of Child & Adolescent Psychiatry shows disturbing results beyond trauma, including that children “accept violence as a way to solve problems” and imitate what they witness.

The George Floyd video showed so many that digital visibility can change history, but it also revealed how unprepared everyone’s minds and bodies are for the relentless exposure to human suffering on our screens.

Children and youth, whose minds are still developing, are absorbing images and videos they are not equipped to process. They are learning to see cruelty and death as everyday content, rather than extraordinary tragedies that demand compassion and response.

When the brain compartmentalizes suffering as ordinary content, it diminishes natural empathic responses. This constant exposure is not only altering children’s emotional development but also shaping the kind of society we will all have to endure.

Children exposed to disturbing imagery online may experience symptoms similar to post-traumatic stress disorder (PTSD), including intrusive memories, avoidance, and emotional numbing.

In the bestselling book The Body Keeps the Score, Dr. Bessel van der Kolk emphasizes that trauma is not only about direct victimization; witnessing violence can also embed itself in the nervous system.

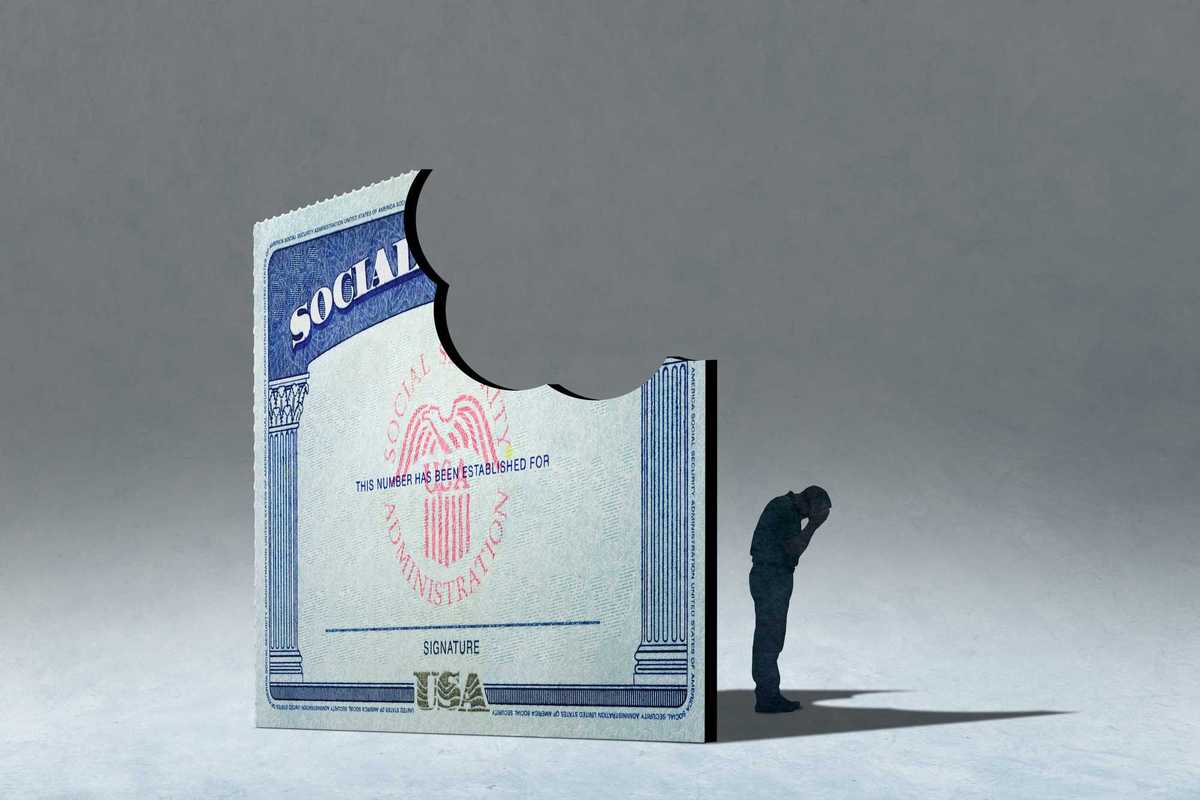

In an era where half of children in the U.S. carry smartphones capable of streaming wars, shootings, and violent acts in real time, society faces an unprecedented question of what happens when developing brains are repeatedly exposed to graphic violence.

A new analysis of 163 studies covering more than two million people shows that children under 13 with smartphones have poorer mental health, including suicidal thoughts.

Unlike past generations, today’s youth encounter violence not only through television or news but in algorithm-driven feeds, often without warning or any consideration of the ages of those being exposed.

Research in neuroscience and psychology suggests that this constant exposure carries deep implications for brain development, emotional regulation, and social empathy.

A study of adolescents exposed to graphic images of terrorism and war online found significant associations with sleep disturbances, anxiety, and depressive symptoms. This suggests that smartphones act as gateways for secondary trauma, where children bear the psychological burden of global violence without direct involvement.

When violence is presented casually, without context or resolution, children internalize a dangerous message: that cruelty is normal and suffering is inevitable. As someone who has worked with victims of abuse and violence, I found that the children always stood out to me the most.

While I was meeting with a mother who had fled domestic violence and working out a safety plan, her eight-year-old son asked me, “What’s the point, if we’re all going to die anyway?”

That innocent child had already absorbed the hopelessness of a world that seemed indifferent to violence. Now imagine children across the country encountering streams of shootings, war crimes, and killings not just in their homes, but in their pockets before they’ve even had a chance to understand what death means.

This is a silent epidemic: a generation desensitized to violence, carrying invisible wounds that will shape their adulthood.

Dismissing this as “that’s just the internet” is not helpful.

Tech companies and content providers must acknowledge their platforms as public health actors. Age-based restrictions must be enforced with rigor, not half-measures.

Algorithms need to be transparent and accountable. No child should stumble upon livestreamed killings while searching for music or dance trends. Policymakers must also step in to protect children.

Just as tobacco and alcohol, and much more recently vaping, were regulated to protect children’s health, violent digital content must be treated as a matter of public health and safety.

Parents and educators need to create a safe space for conversations that help children process what they see, reminding them that cruelty is not normal, that compassion is possible, and that healing is real. Resources such as a Critical Approach can help guide those conversations.

The unforgettable George Floyd video proved that visibility can drive justice, but it also exposed how unprepared everyone’s minds and bodies are for relentless exposure to violence.

The challenge is no longer just what children are seeing; it is what repeated exposure is doing to who they are becoming. If we fail to act, we risk raising a generation for whom violence is entertainment, cruelty is normalized, and empathy is a myth. We owe our children a better future.

Asha Wasuge is a professional policy advocate for the unhoused population and a Public Voices Fellow in Domestic Violence and Economic Security with The OpEd Project.