Madelyn Sanfilippo is an assistant professor in the School of Information Sciences at the University of Illinois Urbana-Champaign and book series editor for Cambridge Studies on Governing Knowledge Commons. She is a Public Voices Fellow of The OpEd Project.

I often see online advertisements recruiting me to participate in research studies for Sanfilippo Syndrome. Fortunately, I don’t have this rare genetic condition; it’s simply my last name. Just as often, I see ads for college degree programs and toys.

As a professor who studies privacy and technology policy, I can see why these ads make sense: systems know things about me, even if imperfectly. I’m not looking to go back to school, but I am a parent who buys lots of toys.

While many of us shrug at personalized ads — maybe even buying one of the many products or services tailored to our wants and needs — the truth is that targeted advertising is unnerving and inappropriate.

It is, after all, the result of surveillance capitalism, or very simply: a system that has every financial incentive to capture, save, and use as much data about us as possible at all times. People are data and data are a commodity. If this is true, we’re not just the users of technology, we’re also the used. Our identities are bought and sold, while we pay for that privilege.

And it’s about to get worse.

As generative artificial intelligence enters this system, we can and should expect to see more: more manipulation, more prediction (which can be both more unnerving and less accurate), and more biased ads.

Imagine ads promoting sales that are targeted to us based on our race, or on a vague, stereotype-driven guess about our race or ethnicity. Would it be OK for someone to pay more or less for the same items or services based on their race?

Think of the most manipulative ads you’ve ever seen: ads that create a false sense of urgency, or that promise incentives while hiding the real costs. What if every advertisement you ever saw did this? We cannot simply accept this.

Broadly, practices of targeting and prediction around advertising are not new, but the recent evolution of generative AI and its rapid incorporation into advertising — in the past weeks, Google and Meta have rolled out products toward this end — pose new and real challenges.

Generative AI advertisements have the potential to scale even further, to target more directly, and to adapt in real time to increase clicks or purchases based on our behavior, context, and attributes.

Of course, many will see this as an exciting opportunity or the natural extension of the modern digital economy. However, it puts profits ahead of the average person and is a major issue for consumer protection, or, as perhaps we ought to see it, protection of people.

We cannot wait for the harm to unfold and then respond in a panic to the inevitable discriminatory ads or dark patterns that will emerge, as they have from less sophisticated attempts to capture our attention and wallets.

As individuals, we can monitor our ad preferences on many platforms. Google, YouTube, Facebook, Instagram, X, and TikTok all offer some user control or transparency, but this is not enough.

As a society, we need oversight to address these issues at scale. We need legislation like the bipartisan Digital Consumer Protection Commission Act, introduced by U.S. Sens. Lindsey Graham, R-S.C., and Elizabeth Warren, D-Mass.

Existing consumer protection infrastructure is overcommitted and short on the technical expertise needed to evaluate concerns about new uses of AI, like advertising. This proposal would create a new federal agency, bringing together experts who can address privacy concerns, deceptive pricing, and bias due to AI and data brokers in advertising, without putting the burden on us to protect ourselves.

People are not merely users. People are not merely consumers. We are citizens. We are parents and children and sisters and brothers. We are friends. Reducing us to users and consumers makes us less real. If we are just data in this discussion, dehumanized and abstracted, it becomes almost a math problem, which tends to make people both less interested and more willing to accept these practices as objective.

When privacy comes up in popular culture, the discussion often focuses on social media alone, or perhaps on a tradeoff with security in debates over government surveillance. It’s rarely about advertising, but it needs to be.

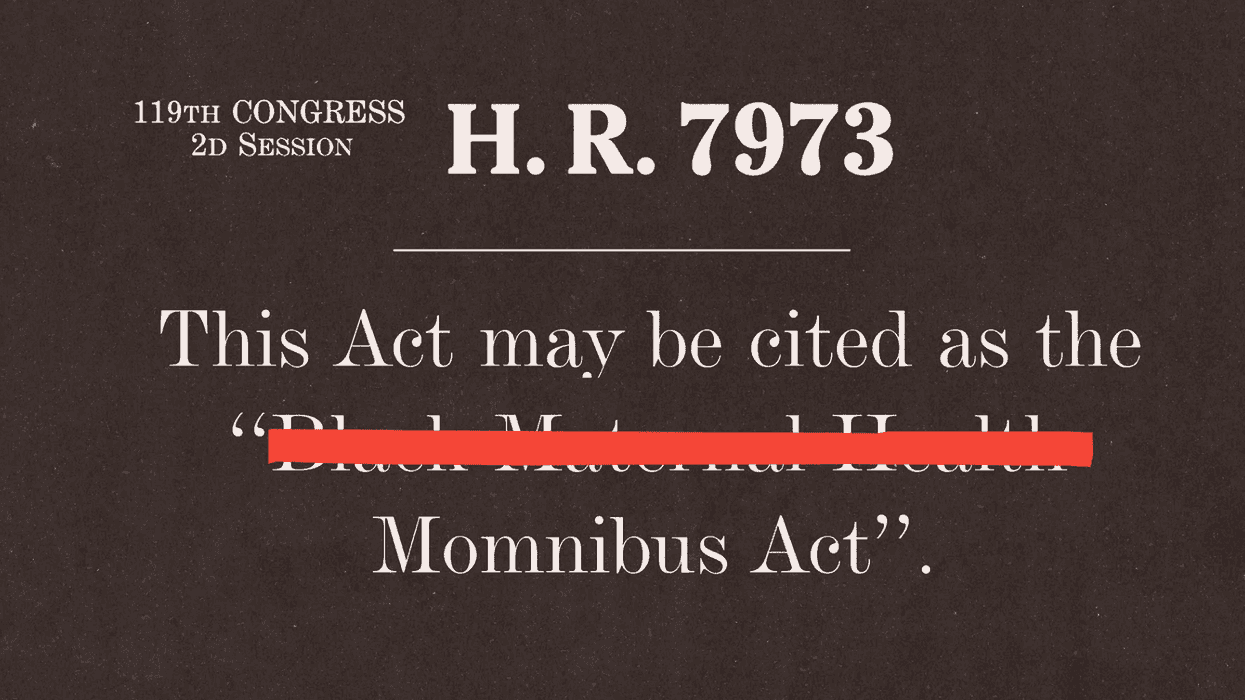

Rep. Lauren Underwood, a lead sponsor of the Momnibus package, said the title change reflects how people commonly refer to the legislation and emphasized that the bill continues to help Black women. (Samuel Corum/Getty Images)

Rep. Lauren Underwood, a lead sponsor of the Momnibus package, said the title change reflects how people commonly refer to the legislation and emphasized that the bill continues to help Black women. (Samuel Corum/Getty Images) At an April congressional hearing, Rep. Summer Lee questioned Health and Human Services Secretary Robert F. Kennedy Jr. about reports that organizations applying for federal dollars had been told to remove words including ‘Black’ from funding applications. (ALLISON BAILEY/NURPHOTO/AP)

At an April congressional hearing, Rep. Summer Lee questioned Health and Human Services Secretary Robert F. Kennedy Jr. about reports that organizations applying for federal dollars had been told to remove words including ‘Black’ from funding applications. (ALLISON BAILEY/NURPHOTO/AP)