Artificial intelligence (AI) is rapidly disrupting America’s job market. Within the next decade, positions such as administrative assistants, cashiers, postal clerks, and data entry workers could be fully automated. Although the World Economic Forum projects a net global increase of 78 million jobs by 2030, significant policy efforts will be required to support the millions of workers who are displaced along the way. The Trump administration should craft a comprehensive plan to tackle AI-driven job losses and ensure a fair transition for all.

As AI is expected to reshape nearly 40% of workers’ skills over the next five years, investing in workforce development is crucial. To be proactive, the administration should establish partnerships to provide subsidized retraining programs in high-demand fields like cybersecurity, healthcare, and renewable energy. Providing tax incentives for companies that implement in-house reskilling initiatives could further accelerate this transition.

To ensure inclusivity, community technology centers and libraries equipped with online courses could be deployed in rural and underserved areas, helping workers across the country adapt to the evolving economy.

AI disproportionately affects regions reliant on clerical and manufacturing jobs, exacerbating local economic hardships. Establishing “economic diversification zones” in these communities—offering tax breaks, grants, and infrastructure investments—would attract growth-oriented industries such as advanced manufacturing, green energy, and technology startups, fostering broader economic resilience.

Rural areas, however, face a bigger challenge: they are among the least served by technology infrastructure, including high-speed internet. This digital divide limits access to the tools and resources necessary to participate in emerging AI-driven industries, putting these communities at risk of being left further behind. Many of these areas form the backbone of the Trump administration’s voter base, making their inclusion in the AI economy both an economic imperative and a political necessity. Without targeted investments to bridge this gap, rural regions may miss out on the opportunities AI could bring, compounding existing economic disparities.

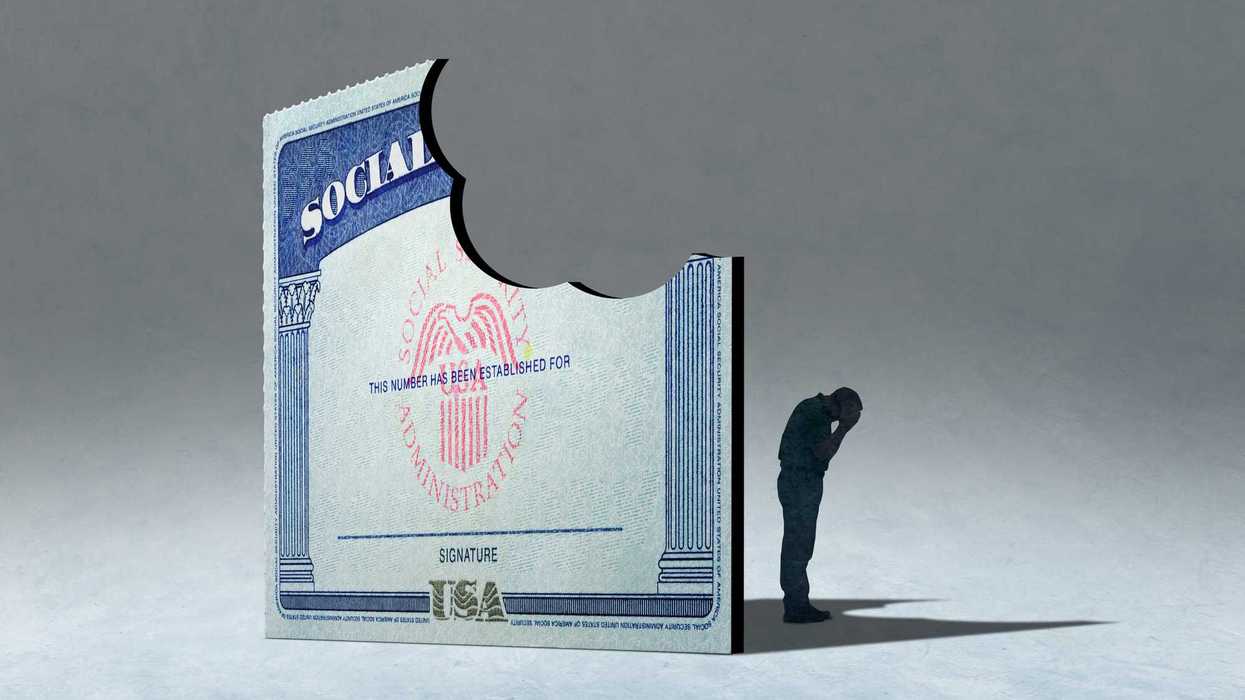

Displaced workers often face unemployment and financial instability. Expanding unemployment benefits to include income-based retraining support and extending coverage duration would offer essential relief. Decoupling healthcare from employment could also reduce stress and uncertainty. Meanwhile, portable benefits, which allow retirement and healthcare coverage to follow workers across jobs, would mitigate career-transition risks and bolster economic resilience.

Employers in emerging industries often struggle to fill vacancies despite high unemployment in declining sectors. The Trump administration must facilitate partnerships between educational institutions, labor unions, and employers to align training programs with industry needs. Apprenticeships and internships in fields like AI and machine learning could provide workers with on-the-job experience.

Micro-credentialing programs—short, specialized training modules—would allow displaced workers to transition into new roles without requiring full degrees, ensuring a faster and more efficient shift to growing industries.

Barriers such as inadequate childcare, eldercare, and inflexible work arrangements disproportionately affect women and low-income families. Subsidizing childcare and eldercare could enable more individuals to pursue retraining and employment. Encouraging remote work and flexible scheduling would expand opportunities for workers in rural areas and those with caregiving responsibilities.

The integration of AI and automation into the workforce represents both a challenge and an opportunity. By investing in retraining programs, economic diversification, and robust social safety nets, the Trump administration could empower workers to navigate this transformative period.

However, given the administration's policy direction, which deprioritizes investments in social safety nets, workforce retraining, and regional economic development, it is unlikely that these comprehensive changes will be pursued. Without a significant shift in priorities, many of the most vulnerable workers will face the full brunt of automation-driven job losses without sufficient support. Ironically, this burden will fall most heavily on the administration's strongest source of support, rural communities and blue-collar workers, further deepening the challenges they face. This stark reality underscores the urgent need for a forward-looking strategy to address these issues head-on.

Robert Cropf is a professor of political science at Saint Louis University.
