Pearl, the author of “ChatGPT, MD,” teaches at both the Stanford University School of Medicine and the Stanford Graduate School of Business. He is a former CEO of The Permanente Medical Group.

More than two-thirds of U.S. physicians have changed their minds about generative artificial intelligence and now view the technology as beneficial to health care. But as AI grows more powerful and prevalent in medicine, apprehensions remain high among medical professionals.

For the last 18 months, I’ve examined the potential uses and misuses of generative AI in medicine, research that culminated in my new book, “ChatGPT, MD.” Over that time, I’ve seen the fears of clinicians evolve from worries over AI’s reliability and, consequently, patient safety to a new set of fears: Who will be held liable when something goes wrong?

Technology experts have grown increasingly optimistic that the next generations of AI technology will prove reliable and safe for patients, especially under expert human oversight. As evidence, recall that Google’s first medical AI model, Med-PaLM, achieved a mere “passing score” (>60 percent) on the U.S. medical licensing exam in late 2022. Five months later, its successor, Med-PaLM 2, scored at an “expert” doctor level (85 percent).

Since then, numerous studies have shown that generative AI increasingly outperforms medical professionals in various tasks. These include diagnosis, treatment decisions, data analysis and even empathy.

Despite these advancements, errors in medicine can and will occur, regardless of whether the expertise comes from human clinicians or advanced AI technologies.

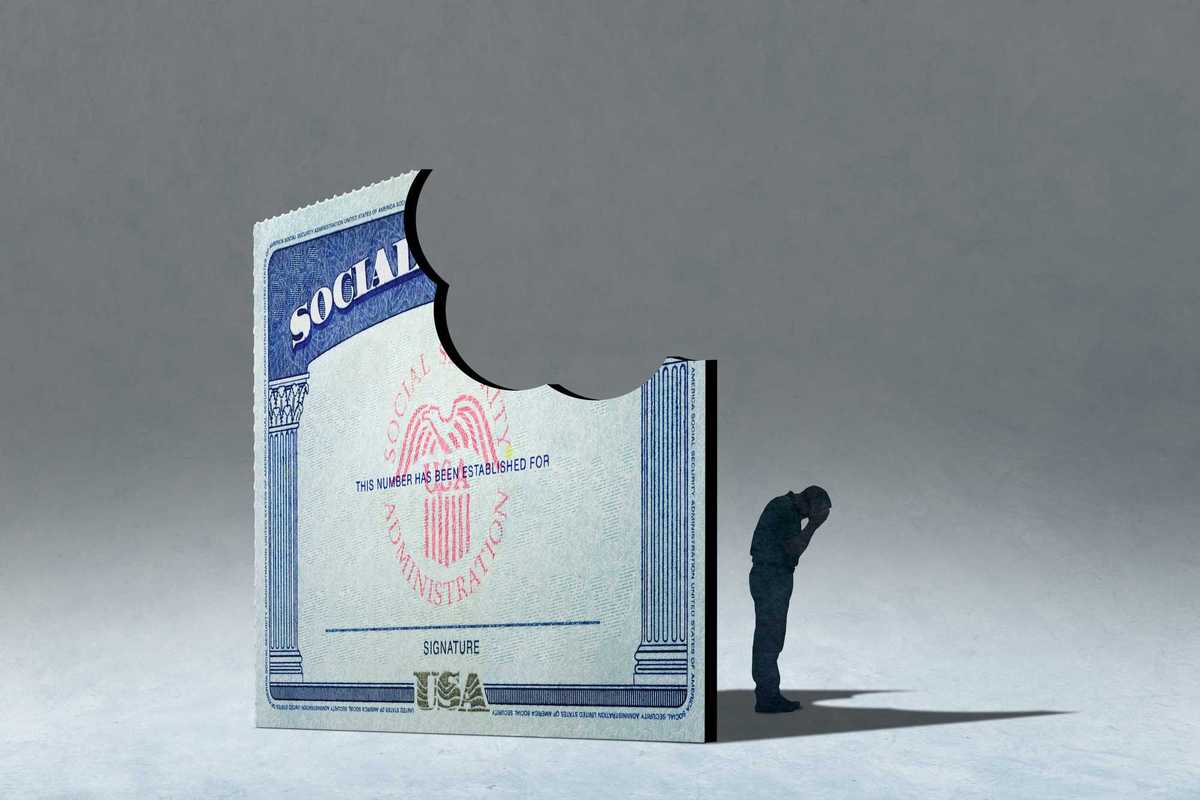

Legal experts anticipate that as AI tools become more integrated into health care, determining liability will come down to whether errors result from AI decisions, human oversight or a combination of both.

For instance, if doctors use a generative AI tool in their offices for diagnosing or treating a patient and something goes wrong, the physician would likely be held liable, especially if it’s deemed that clinical judgment should have overridden the AI’s recommendations.

But the scenarios get more complex when generative AI is used without direct physician oversight. As an example, who is liable when patients rely on generative AI’s medical advice without consulting a doctor? Or what if a clinician encourages a patient to use an at-home AI tool for help with interpreting wearable device data, and the AI’s advice leads to a serious health issue?

In a working paper, legal scholars from the University of Michigan, Penn State and Harvard explored these challenges, noting: “Demonstrating the cause of an injury is already often hard in the medical context, where outcomes are frequently probabilistic rather than deterministic. Adding in AI models that are often non intuitive and sometimes inscrutable will likely make causation even more challenging to demonstrate.”

To get a better handle on the risks posed to clinicians when using AI, I spoke with Michelle Mello, professor of law and health policy at Stanford University and lead author of “Understanding Liability Risk from Using Health Care Artificial Intelligence Tools.”

Her analysis, based on hundreds of tort cases, suggests that current legal precedents around software liability could be adapted to include AI. However, she points out that direct case law on any type of AI model remains “very sparse.” And when it comes to liability implications of using generative AI, specifically, there’s no public record of such cases being litigated.

So, for medical professionals worried about the risks of implementing AI, Mello offers reassurances mixed with warnings.

“At the end of the day, it has almost always been the case that the physician is on the hook when things go wrong in patient care,” she noted, but added: “As long as physicians are using this to inform a decision with other information and not acting like a robot, deciding purely based on the output, I suspect they’ll have a fairly strong defense against most of the claims that might relate to their use of GPTs.”

To minimize the risk, Mello said AI should be implemented as a supportive tool to enhance (not replace) clinical decisions. She also urges health care professionals to negotiate terms of service with major AI developers like Nvidia, OpenAI and Google, whose current disclaimers deny any liability for medical harm.

While concerns about the use of generative AI in health care are understandable, it’s critical to weigh these fears against the existing flaws in medical practice.

Each year, misdiagnoses lead to 371,000 American deaths while another 424,000 patients suffer permanent disabilities. Meanwhile, more than 250,000 deaths occur due to avoidable medical errors in the United States. Half a million people die annually from poorly managed chronic diseases, leading to preventable heart attacks, strokes, cancers, kidney failures and amputations.

Our nation’s health care professionals don’t have the time available in their daily practice to address the totality of patient needs. The demand for medical care is higher than ever at a time when health insurers — with their restrictive policies and bureaucratic requirements — make it harder than ever to provide excellent care.

It is imperative for policymakers, legal experts and health care professionals to collaborate on a framework that promotes the safe and effective use of AI. As part of their work, they’ll need to address concerns over liability. But they must recognize that the risks of not using generative AI to improve care will far outweigh the dangers posed by the technology itself. Only then can our nation reduce the enormous human toll resulting from our current medical failures.