Levine is the senior elections integrity fellow at the German Marshall Fund's Alliance for Securing Democracy, where he assesses vulnerabilities in electoral infrastructure, administration, and policies. Albanese is associate professor and associate chair for research in the Department of Information Science and Technology at George Mason University.

America's elections are under unprecedented threat. From foreign interference in the 2016 presidential race to the Jan. 6, 2021, insurrection, the integrity of our democratic process has been repeatedly targeted in recent years. Safeguarding it against future attacks requires an often-overlooked resource: Generation Z.

Young Americans have come of age during a time of unparalleled challenges to our democracy. They have witnessed the erosion of trust in our institutions, the rise of polarization and misinformation, and the vulnerability of our electoral systems to both domestic and foreign malicious actors. This tumultuous introduction to the political landscape has left many in Gen Z both disillusioned and eager to be part of the solution.

Yet when we speak with election officials and their partners about engaging the next generation in their work, the conversation centers mostly on poll worker recruitment and awareness-raising. While these are important initiatives, they fail to recognize the full potential of young people as partners in the fight for election security. To truly empower Gen Z to make a difference, we must invest in programs that give young people hands-on experience and training, ensuring a continuous pipeline of knowledgeable individuals entering the field. The Virginia Cyber Navigator Internship Program, a collaboration between the Virginia Department of Elections and six Virginia universities, offers one powerful model.

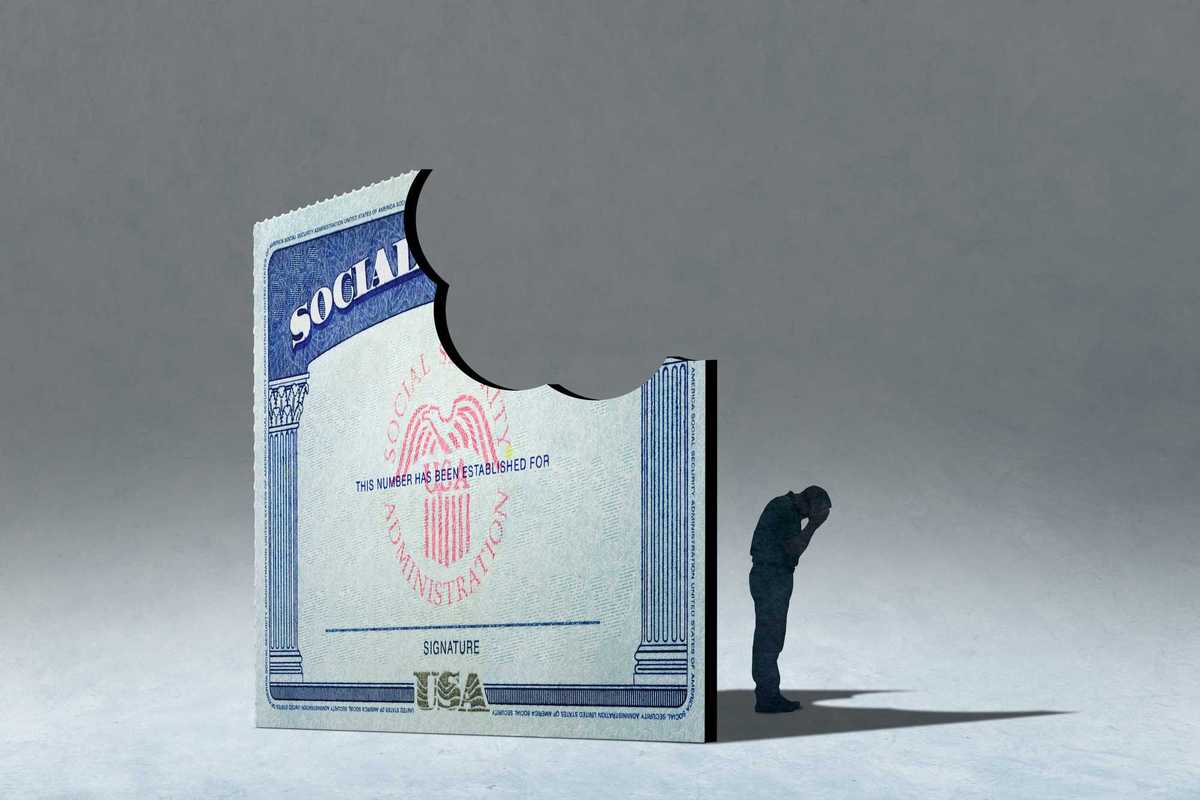

After Russian-affiliated actors targeted voter registration databases and state election websites across the United States in 2016, Virginia lawmakers required local election offices to meet minimum cybersecurity standards. However, many officials still lacked the money, personnel, or knowledge both to meet these standards and to upgrade the security of their election infrastructure.

The VA Cyber Navigator Internship Program, which deploys college students with election and cybersecurity training to help Virginia's local election offices improve their security posture, was created to address that gap. The students first take an election security course developed by the universities and the Virginia Department of Elections. The course provides specialized training on the significance of voting and the technical issues involved in securing election processes (the authors of this piece co-teach the election security course offered at George Mason University).

Upon completing this training, students are eligible to apply for the internship program. Selected students work as interns at local election offices throughout Virginia for 10 weeks in the summer. Each year's internship kicks off with an election security bootcamp hosted by the University of Virginia in Charlottesville, which brings together all interns, faculty from participating universities, elected officials, and experts such as Chris Krebs, the first director of the Cybersecurity and Infrastructure Security Agency. Participating election offices benefit from the interns' cybersecurity expertise, while the interns gain hands-on experience and connections with academics and election officials. Over time, that experience could create a pipeline of talent to mitigate the loss of institutional knowledge that occurs when older, more experienced election officials leave the field.

Deploying technically savvy students with specialized cybersecurity training to local election offices gives students valuable hands-on experience while addressing a critical need for officials. However, recent developments at both the federal and state levels raise concerns about our commitment to prioritizing election security. The paltry $55 million allocated for election security in the fiscal 2024 federal budget, along with some states' reluctance to collaborate with CISA, suggests a troubling failure to treat the integrity of our democratic process as the national security imperative that it is.

Investing in the education and engagement of young people cultivates a cadre of informed, committed individuals who understand the gravity of the threats we face. In doing so, we can build a stronger, more resilient democracy, one better prepared to withstand the challenges posed by those who seek to undermine it. This is not merely a matter of good governance but of national security. Our adversaries, both foreign and domestic, have demonstrated their willingness to exploit any vulnerability in our electoral infrastructure.

Strengthening that infrastructure demands fresh thinking and new perspectives — precisely what Gen Z has to offer. The future of our democracy depends, in part, on our ability to inspire and empower young people to take up this cause as their own.