As a former teacher who worked in a high school when Snapchat was born, I witnessed the birth of sexting and its impact on teens. I recall asking a parent whether he was checking his daughter’s phone for inappropriate messages. His response was, “Sometimes you just don’t want to know.” Now the federal lawsuit filed last week against Elon Musk’s xAI has put a national spotlight on AI-generated deepfakes and the teenage girls they target. Parents and teachers can’t ignore the crisis inside our schools.

AI Companies Built the Tool. The Grok Lawsuit Says They Own the Damage.

French prosecutors have suggested that Elon Musk deliberately allowed the sexualized image controversy to grow so that it would drive up activity on the platform and boost the company’s valuation. Whether or not that theory is true, a company that builds a tool, knows it can be weaponized, and releases it anyway is making a risk-based decision on the belief that it can act without consequence. The Grok lawsuit could make these types of business decisions much more costly.

Attorneys for the plaintiffs in the federal lawsuit filed against xAI this month argue the company "saw a business opportunity: an opportunity to profit off the sexual predation of real people, including children," according to Rolling Stone. The outrage is global. France reported Grok to prosecutors. Malaysia and Indonesia blocked the chatbot. Brazil demanded that X remove deepfake content, and the UK is taking legislative action to criminalize the creation of sexually explicit, nonconsensual content.

The Cat-and-Mouse Problem

"Nudification" apps, tools that strip clothing from photos to generate realistic nude images, have existed in corners of the internet for years with little consequence. But in 2024 and 2025, when major AI platforms including xAI updated their tools in ways that made the capability accessible to almost anyone, what had been a niche problem spread rapidly into schools and communities across the country. Victims, unfortunately, are left with little recourse for several reasons.

First, when regulation or legislation starts to restrict what users can do on one platform, they simply hop to another. This pattern is familiar to security practitioners, and the 2022 takedown of the Hydra Market serves as a classic example. A dark web marketplace is seized, and almost overnight a new one emerges in its place. The threat isn’t eliminated. It relocates.

Second, it’s difficult to trace the true origin of deepfake images and videos. Even though forensic tools exist, they lack the sophistication needed to be truly helpful in deepfake video investigations, according to ethical hacker FC, aka FREAKYCLOWN. “Whilst there may be a digital trail to follow with some deepfakes, attribution is always going to be challenging.”

Third, there’s cost: a forensic investigation could be prohibitively expensive for many teenage victims. Given that there is no guarantee an investigation will yield the results they need, the best place for victims to start is trying to find where the image or video originated. “Signatures, like which software generated it, which software distributed it, and any possible metadata embedded in the file as well as digital fingerprints for certain platforms will be apparent,” FC said. “But in many cases the person behind the video may have a level of anonymity that could be impossible to unpick.”

The Law Is Catching Up, But Not Fast Enough

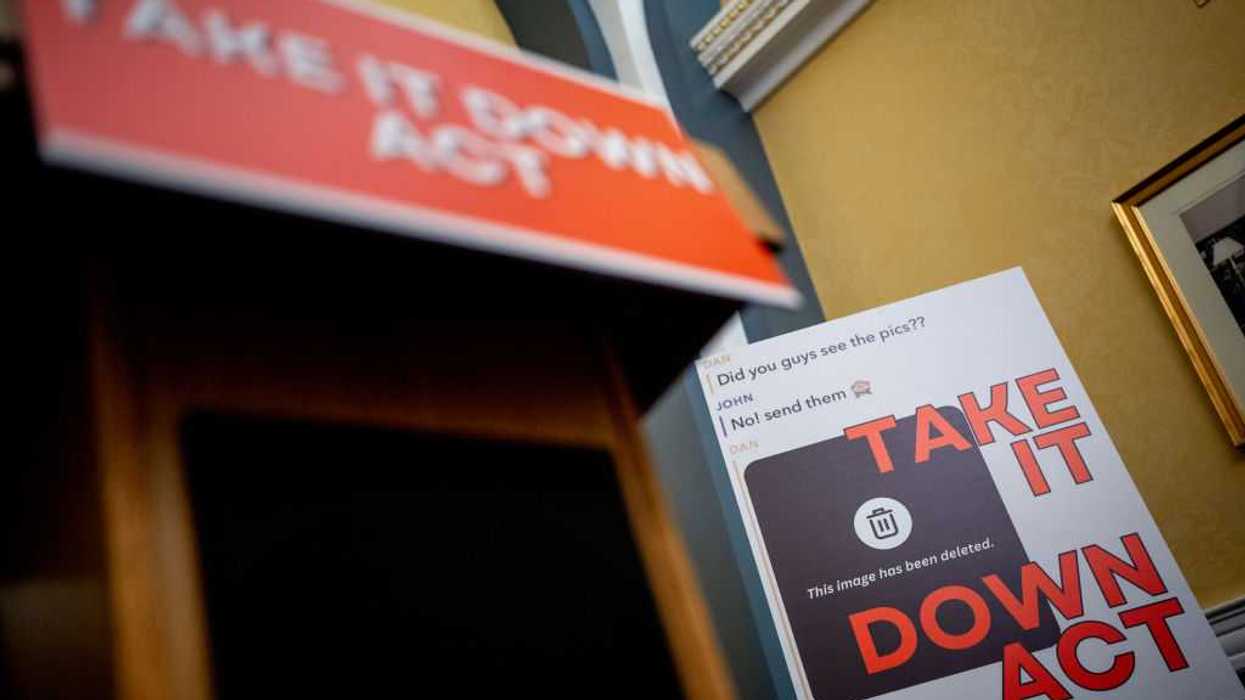

Pennsylvania amended its criminal code to specifically classify AI-generated child sexual abuse material as a third-degree felony, and legislators in multiple other states are pursuing similar measures. Since deepfake technologies became publicly accessible almost a decade ago, states have been passing legislation to protect victims, but it wasn’t until 2025 that the federal Take It Down Act was signed into law, and its provisions are still phasing in. The act is a step in the right direction, but legal experts caution that legislation takes time, and teenagers remain acutely vulnerable while lawmakers work to catch up. Meanwhile, some states are looking to hold not only perpetrators but also AI platforms accountable.

What Schools Must Do Now

Schools need clear protocols so that when students report these incidents, administrators escalate them rather than bury them. "Parents, educators, workers, and policymakers are now asking sharper questions about accountability, fairness, and safety. We still have time to shape how these systems enter public life," said J.B. Branch, attorney and policy counsel at Public Citizen.

As a first step, schools can follow the lead of the district that includes Lynbrook High School in San Jose, CA, where the board of trustees unanimously approved updates to the district’s bullying policy, Board Policy 5131.2, to include protections against cyberbullying both on and off campus.

A Reason for Cautious Hope

Effective change requires strong leadership, though. Unfortunately, nearly seven months passed between the first known misuse of Grok and the introduction of restrictions. Even then, the restrictions applied only to non-paying users, and they came alongside Musk’s denial that he had any knowledge of Grok generating sexualized images of minors.

This lawsuit is an opportunity to establish something the cybersecurity industry has long understood about accountability: if you build the tool that made this possible, you bear responsibility for what it did. AI companies need to implement structural safeguards before deploying tools capable of generating explicit content, not wait until a public outcry or class-action lawsuit forces their hand.

AI companies need to be held accountable. They built the tools, and now a lawsuit is asking one of them to own the damage. The real question is not only whether they will be held accountable, but whether this will be the moment that changes the calculus permanently.

Kacy Zurkus is a freelance writer whose work has been published in Next Avenue, Dark Reading, and Security Boulevard. She's also Director of Content for RSAC, a cybersecurity company.