Amazon’s loss in court over its attempt to shield the source code behind its Just Walk Out technology is a small win for shoppers, but the bigger story is how employers are quietly collecting biometric data from their own workers.

From factories to Fortune 500 companies, employers are demanding fingerprints, palmprints, retinal scans, facial scans, or even voice prints. These biometric technologies are eroding the boundary between workplace oversight and employee autonomy, often without consent or meaningful regulation.

Everyone has to weigh data privacy decisions. Delete social media accounts to protect your data and risk being isolated from friends and family? Submit to a retina scan at the airport or risk being the uptight person who slows down the security line?

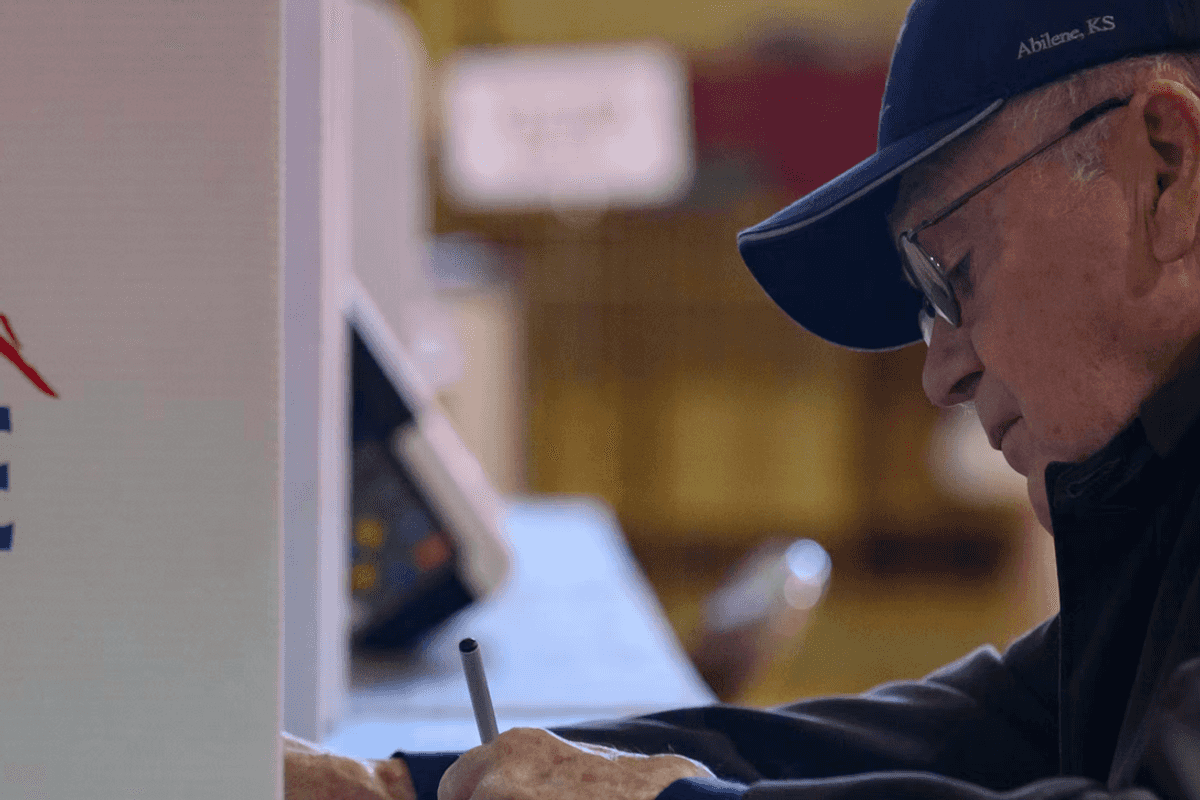

But the questions are becoming way more existential, particularly as they invade the workplace. Workers now have to ask a totally different question: Forfeit data or forfeit income?

Because there’s no federal employment law that gives people the option to consent to biometric collection and use, employers can require employees to undergo scanning systems and other biometric applications.

This legal gap exists because, out of the 20 states with privacy laws that regulate private data collection, some still exclude data collected in employment contexts. So, biometric data protection is largely based on where employees live and work, workers’ rights firm Outten & Golden says.

This patchwork of legal protections is worsened by minimal regulation of corporate data collectors. Right now, companies simply provide notices about how they collect and use personal information, an approach known as Notice and Choice. In this paradigm, people are shown tons of company privacy terms, but the density and legal jargon of those documents leave people bewildered.

And notices do not wholly cover the frontier of consent. As former Director of the Federal Trade Commission’s Bureau of Consumer Protection Samuel Levine stated back in 2019, “Even if we read the policies and understood them, we can hardly exercise choice given how much we rely on digital services, and the lack of competition in many markets.”

A 2023 Pew study backed up Levine’s statements, showing that 67% of Americans don’t understand what companies do with their data and 73% believe they have little to no control over it. Clearly, most Americans are making uninformed decisions about the data they give up just to earn a living.

Now, combine that with no option to consent at all, and workers are being strong-armed into funneling their biometric data into a black box. Faced with the risk of being fired or staying unemployed, workers find the decision a no-brainer. Yet the ease of that decision is not a reflection of how much people actually value their personal data.

In a 2025 Ipsos poll, biometric data ranked fourth (32%) among the types of data believed to be most important to keep private. Only financial, health, and credit card usage data ranked higher.

Given this, employers should allow workers to express these privacy values through choice. Instead, the only two grounds for declining to surrender biometrics are religion and disability. That these are the only “outs” tells us that legislators either aren’t aware of, or don’t care about, the privacy preferences of everyday people.

Employers’ reasons for mandating biometrics include building security, tracking employee time and attendance, machine activation, and authenticating users. Because of this, privacy statutes have carveout defenses tied to security, fraud, and crime prevention.

Ironically, corporations’ interest in security stomps out employees’ right to secure their own data. As noted by the Wyoming Law Review in 2024, current case law ignores how an intrusion or breach of employee biometric data opens people up to limitless invasions of privacy in their personal lives.

This should not be the case. States and the federal government should enact laws that prohibit employment contracts from making the surrender of biometric data a condition of employment. Given existing dubious consent practices, a new form of choice should become normalized: opt in or opt out.

Faith Wilson is a Public Voices Fellow on Technology in the Public Interest with the OpEd Project.