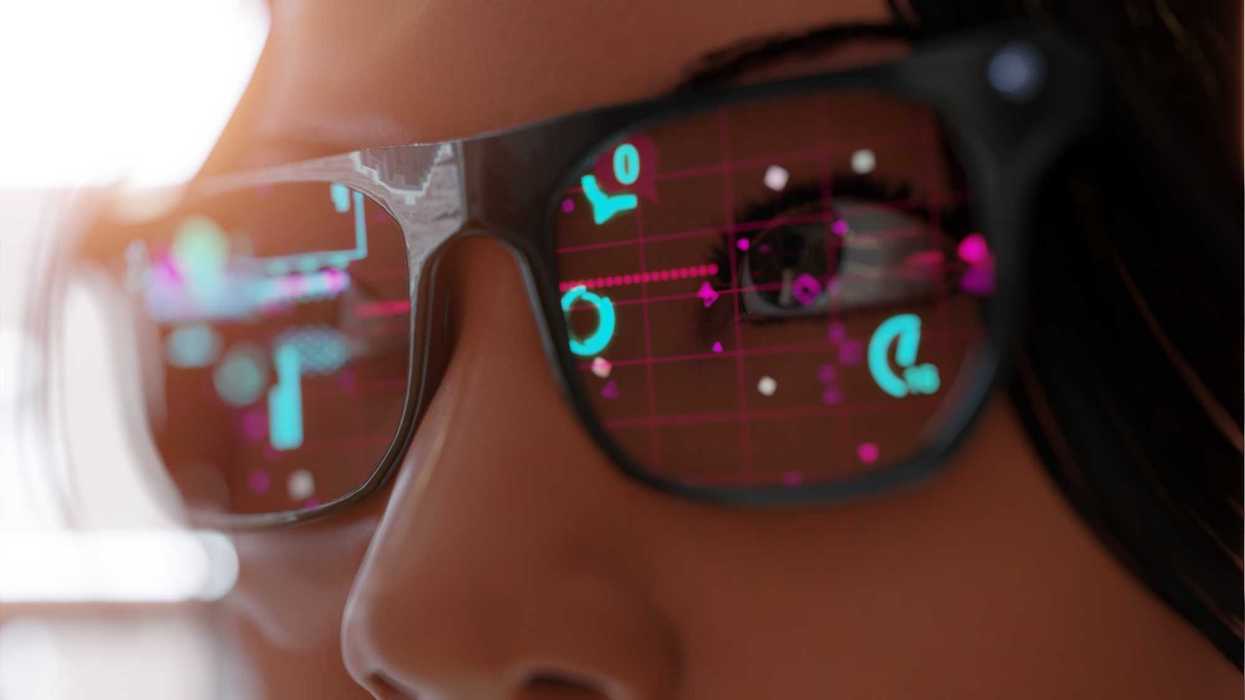

Last month, Apple announced the development of three wearable smart devices, all equipped with built-in cameras. The company has its sights set on 2027 for the release of its new smart glasses, AI pendant, and camera-equipped AirPods, all of which will offer AI features. As the market for wearable products with smart-recording capabilities expands, so do the risks that come with how people choose to use the technology.

In Minneapolis in January, Alex Pretti was killed after an encounter with federal agents while filming them with his phone. He was not a suspect in a crime. He was not interfering, but was doing what millions of Americans now instinctively do when they see state power in motion: witnessing.

Pretti’s death is still under investigation, and the legal facts will be contested. But the moral and political question it raises is already clear. In an era when nearly every citizen carries a camera, the act of observing government force has become both easier and more dangerous. Technology has democratized documentation, but it has also transformed the witness into a perceived threat. The result is a troubling pattern: the very tools that were supposed to make power more accountable are increasingly met with intimidation, targeting, and, in the most extreme cases, lethal force.

This is not an isolated dynamic. The bystanders who filmed George Floyd were initially threatened with arrest. Journalists covering the Atlanta “Cop City” protests have been detained and charged under expansive domestic terrorism statutes. In Portland during the 2020 protests, federal officers repeatedly seized and questioned individuals whose primary “offense” was recording. Abroad, reporters in Gaza and the West Bank have been shot while clearly marked as press. The specifics differ, but the logic is consistent. When cameras proliferate, the state begins to treat the act of seeing as subversive.

Pretti’s case brings that logic home. Federal agents operating in an American city confronted a civilian whose only apparent act was observation. Whether through panic, misjudgment, or institutional culture, the presence of a recording device was treated not as a protected exercise of constitutional liberty, but as a provocation. This is the quiet inversion taking place. The First Amendment once stood as a shield for those who spoke and those who watched. Today, in moments of tension, it is increasingly treated as an obstacle.

Technology has changed the architecture of accountability. In the twentieth century, oversight flowed primarily through institutions: courts, legislatures, and professional media. In the twenty-first century, it flows through networks. A single video can expose misconduct, contradict official statements, and mobilize public scrutiny within hours. For communities that have long experienced disproportionate policing and surveillance, the smartphone has become a tool of self-defense in the civic sense. It is how power is checked when formal channels fail or move too slowly.

But this shift has also created a perverse incentive. When documentation becomes ubiquitous, those who wield force know that every action may be dissected and judged. In that environment, the witness is no longer a neutral bystander but a liability. The danger is not only the tragic loss of life in cases like Pretti’s, but the chilling effect that follows. If observing police activity can get you detained, pepper-sprayed, or worse, rational citizens will think twice before lifting their phones. The public square grows quieter, and misconduct becomes easier to hide. This is how a democracy drifts: not through the abolition of rights on paper, but through the normalization of fear around exercising them.

If the act of witnessing is now central to how constitutional accountability functions, then the law must evolve to protect it explicitly.

First, Congress should enact a clear federal “Right to Witness” statute. Courts have recognized a First Amendment right to record police, but doctrine alone is insufficient when agents on the ground operate under stress and ambiguity. A statute should make plain that recording or observing law enforcement, including federal agents, is presumptively lawful, and that detention, seizure of devices, or use of force solely on that basis is prohibited. Retaliation against witnesses should carry enhanced civil and criminal penalties, and evidence obtained after unlawful interference with recording should be subject to automatic suppression, much as statements taken in violation of Miranda are.

Second, qualified immunity should not shield officers who use force against individuals engaged in clearly lawful observation. The doctrine was designed to protect reasonable mistakes in fast-moving situations, not to insulate retaliation against constitutional oversight. When the conduct at issue is the suppression of a core First Amendment activity, the legal system should err on the side of accountability.

Third, states and cities should assert their role as constitutional backstops when federal operations occur within their borders. “Sanctuary for witnesses” laws could limit cooperation with federal agencies in cases where force is used against civilians engaged in protected recording, and require automatic review by state attorneys general whenever such incidents occur. Federalism should not mean abdication when fundamental liberties are at stake.

Fourth, the legal system should treat bystander footage with the same seriousness as official body-camera recordings. Preservation requirements, chain-of-custody rules, and penalties for destruction or suppression should apply equally. The public’s camera is now part of the evidentiary infrastructure of justice. It deserves institutional protection.

Finally, there should be a clear civil cause of action for obstruction of lawful civic observation. When individuals are targeted, injured, or killed because they were documenting state conduct, they and their families should not have to rely solely on discretionary prosecutions or protracted constitutional litigation. The law should recognize interference with witnessing itself as a distinct and grave harm.

Some state laws already recognize parts of what a federal “Right to Witness” statute would codify. For example, New York’s Civil Rights Law § 79-p explicitly protects the right to document police activity and allows those whose rights are violated to seek damages, a civil remedy that goes beyond mere constitutional claim-making. Several states, such as Colorado, Hawai‘i, and Illinois, also independently protect the right to record police in public in their statutes or constitutions. Yet even in these jurisdictions, officers sometimes detain people who are recording or seize their devices, and courts are left to sort out the violations later. This patchwork shows that while recording rights are increasingly recognized, they lack the clear, uniform statutory safeguards against interference, force, and impunity that a federal law would provide.

Beyond statutes and doctrines, there is a cultural shift that must occur. Filming police is often framed as antagonistic, as if the camera were an insult rather than a safeguard. In reality, it is an expression of the same civic impulse that underlies jury service, public trials, and a free press. It is how ordinary people participate in the maintenance of lawful government.

Alex Pretti did not set out to be a symbol. He was a citizen with a phone, recording in a community that promises freedom of speech and freedom of the press. That those freedoms can now place someone in mortal danger should trouble anyone who cares about constitutional democracy. The question his death forces is not only what happened in one encounter, but what kind of political order we are becoming when seeing is treated as a threat.

David M. Hatami is an Offensive Security Project Manager and a Public Voices Fellow on Technology in the Public Interest with The OpEd Project. He previously managed cybersecurity and penetration testing operations at Amazon Web Services and was a fellow with Youth for Privacy.

Editor's Note: This story was updated on 3/30 to accurately reflect the timeliness of past events.