Facebook is testing limits on shared external links, making link sharing beyond those limits a paid feature of its Meta Verified program, which costs $14.99 per month.

This change solidifies that verification badges are now meaningless signifiers. Yet it wasn’t always so; the verified internet was built to support participation and trust. Beginning with Twitter’s verification program, launched in 2009, a checkmark next to a username indicated that an account had been verified as belonging to a notable person or as the official account of a business. We could believe that an elected official or a brand name was who they said they were online. When Twitter Blue, and later X Premium, began to support paid blue checkmarks in November of 2022, the visual identification of verification became deceptive. Think of the fake Eli Lilly accounts posting about free insulin and the accounts impersonating Elon Musk himself.

This week’s move by Meta echoes changes at Twitter/X, despite significant evidence that those changes left information quality and user experience worse than before. Whatever Facebook says, all a paid badge tells anyone is that you paid.

Meta argues that this program increases trust in creators’ content and, as a spokesperson shared with TechCrunch, “This is a limited test to understand whether the ability to publish an increased volume of posts with links adds additional value for Meta Verified subscribers.” The idea that this could benefit subscribers assumes that monetization is Facebook's primary value and discounts the interests of other users. More importantly, this test of power and control undermines an informed electorate.

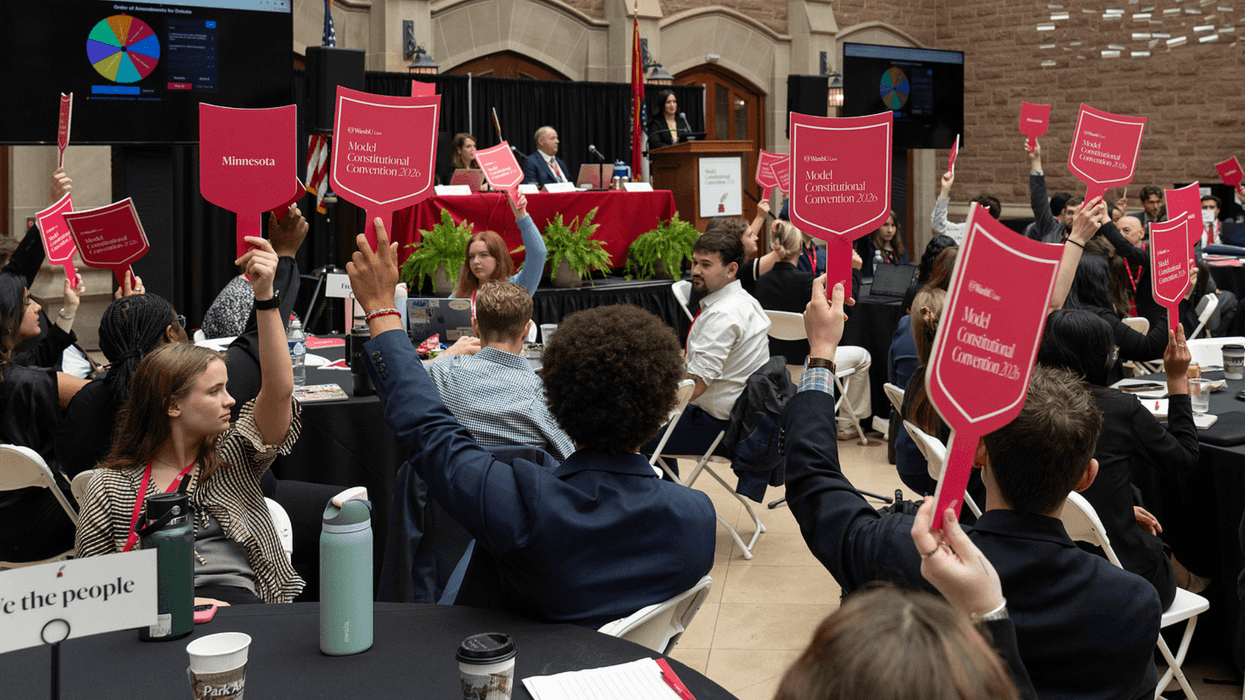

A majority of US adults get news from social media, according to 2025 Pew Research Center data. Facebook, in particular, has the largest share among those who regularly look for news on social media. The importance of social media in the modern information ecosystem cannot be discounted.

Misinformation, which is rampant on social media, is a known problem. Experts argue that individuals can best address it, in part, by verifying information through trusted sources and triangulating across multiple sources. An intentional decision by one of the most powerful platforms of the era to break some of the meager tools available to users to address misinformation is dangerous and should concern us all.

To be sure, there are people who will pay for this, even though they had it for free before December 16, 2025. Yet, it is also true that this will increase user confusion, not satisfaction with content, and it is very likely to exacerbate the misinformation problems that are already overwhelming on Facebook. If this is in fact a test, rather than a soft launch for a major change that has already been determined, Meta should not proceed.

As users, we need to be aware of this further degradation of our information environments and make sure those in our lives who might be vulnerable to the implications of this change are also aware. As citizens, we need to look beyond social media and entertainment for civic information, and to recognize that clicks and traffic that support these changes work against our interests as individuals and as a society.

As we reach a moment in which many are thinking about resolutions and what they might wish to do differently in the upcoming year, consider a social media cleanse, reconnecting more directly with the people you care about, and exploring different information sources.

Madelyn Sanfilippo is an Assistant Professor in the School of Information Sciences, a Public Voices Fellow, a member of the 24-25 OpEd Alumni Project sponsored by the University of Illinois, and an Editor for Cambridge Studies on Governing Knowledge Commons.