While public outrage flares when AI tools like Elon Musk’s Grok generate sexualized images of adults on X—often without consent—schools have been dealing with this harm for years. For school-aged children, AI-generated “nudification” is not a future threat or an abstract tech concern; it is already shaping their daily lives.

Last month, that reality became impossible to ignore in Lafourche Parish, Louisiana. A father sued the school district after several middle school boys circulated AI-generated pornographic images of eight female classmates, including his 13-year-old daughter. When the girl confronted one of the boys and punched him on a school bus, she was expelled. The boy who helped create and spread the images faced no formal consequences.

The case ignited debate over internet safety, deepfake pornography, and school discipline. But it also exposed a deeper truth we are reluctant to confront: decisions made by powerful tech leaders are reshaping childhood faster than schools, parents, or laws can respond—and schools are being left to manage the fallout without the tools they need.

Recent survey data confirms this is not an isolated incident. Researchers found that AI “nudification” is increasingly common in schools, used to harass, humiliate, and exert power over peers. What adults may still perceive as shocking misconduct has, for many students, become disturbingly normalized.

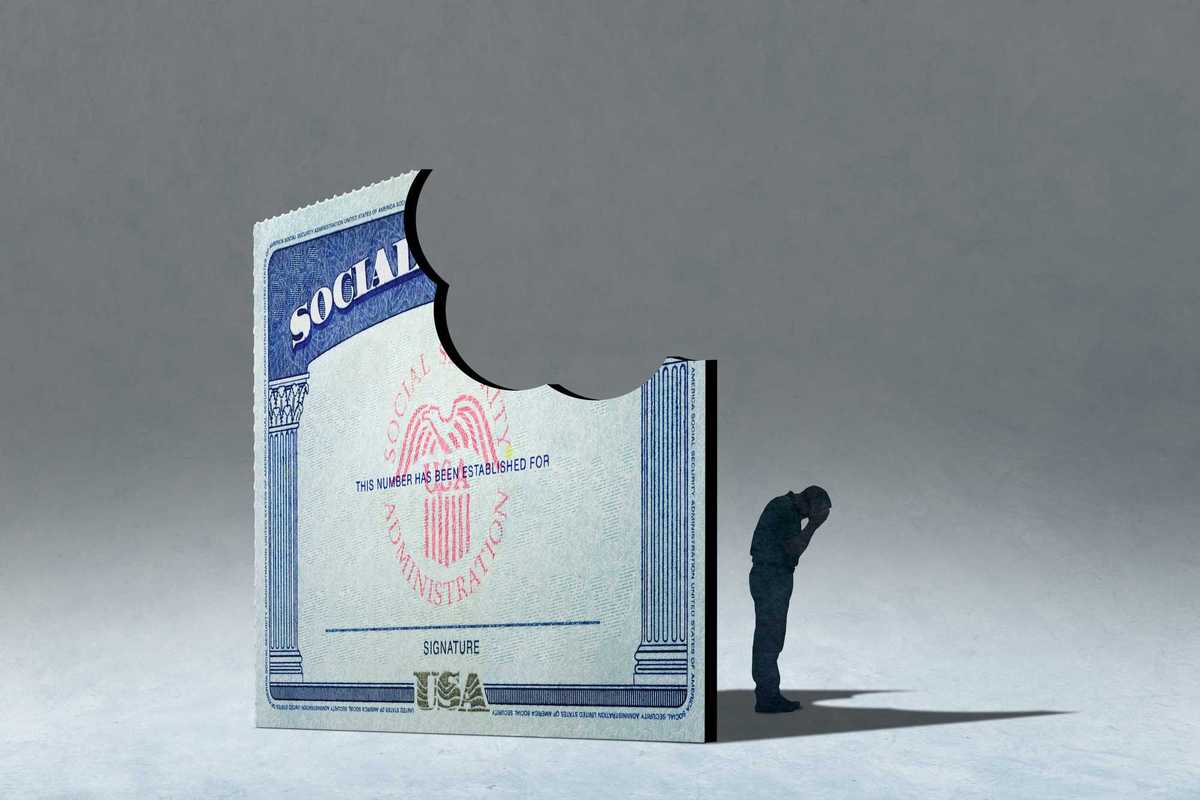

In nearly all 50 states and Washington, D.C., creating and distributing child sexual abuse material is a crime. AI-generated deepfakes, however, present a unique challenge. These images are easy to create, can be shared widely in seconds, and often disappear from platforms just as quickly. Even when perpetrators are identified, the speed, volume, and anonymity of digital sharing make enforcement extraordinarily difficult.

Expecting the legal system to track and prosecute every child and teenager contributing to this epidemic is neither realistic nor effective. If we focus only on punishment after harm occurs, we will always be too late. The goal must be prevention.

Research shows that 31 percent of young people are familiar with deepfake nudes, and one in eight knows someone who has been victimized by them. Girls account for 99 percent of the victims. One in 17 youth and young adults has been directly targeted by AI-generated sexual images—roughly one student in every middle school classroom in the United States. This is not a fringe issue or a moral panic. It is a widespread form of sexual harassment enabled by technology that outpaces our safeguards.

Students need clear guidance to navigate a digital world where a single harmless photo can be transformed into a weapon—sometimes without malicious intent, but with devastating consequences. Yet only 28 states and the District of Columbia require sex education, and just 12 include instruction on consent. This gap has created ideal conditions for the deepfake crisis to flourish.

Without education on bodily autonomy, digital boundaries, and consent, and without meaningful safeguards from tech companies, young people are left unequipped to recognize the harm in creating and sharing explicit AI images. They are even less prepared to respond when they or their peers become targets.

As a mother, I resist the urge to say simply that parents need to talk to their kids. Parents are essential, but many lack the technical knowledge, consistent access, or awareness needed to explain how these images are created, how quickly they spread, and the profound psychological harm they cause. That is where schools must step in.

As a former middle school teacher, I have sat across from parents explaining the seriousness of emerging online trends long before they reached Facebook groups, GroupMe chats, or parent blogs. Schools are often the first places where this harm appears—and they are uniquely positioned to respond.

Schools can and should provide structured, age-appropriate education that reaches all students, ensures consistent messaging, and creates space for honest discussion. Lessons should include:

- How popular apps and tools generate AI images

- The legal ramifications and potential criminal liability

- The deep psychological and emotional harm inflicted on victims

- Clear school- or district-wide reporting protocols

- The rights of victims and available supports

Educators already manage cyberbullying, hunger, school violence, and adolescent mental health. Some may ask whether this is one burden too many. But integrating education about AI-generated pornography is not an added responsibility—it is a necessary evolution of student safety in a digital age.

Unlike many victims, both the woman targeted on X and the 13-year-old girl in Lafourche Parish reported their abuse. But for every report, how many students suffer in silence—ashamed, afraid, or unsure whether adults will take them seriously?

While platforms like X attempt to normalize or minimize the harm of deepfake nudification, educators must push back against the idea that this behavior is accessible, acceptable, or consequence-free. The platforms' message does not stay online. It reaches classrooms, school buses, and lunch tables. When perpetrators face little accountability and victims are punished for reacting, the lesson students learn is devastatingly clear.

If tech leaders will not fully account for the damage their products enable, schools must act—not through harsher punishment, but through education. Teaching AI literacy, consent, and respect is our strongest defense against a problem that is only growing. Prevention, not discipline, is how we protect children—and how we ensure no more students have to fight back just to be heard.

Julienne Louis-Anderson is a former educator, curriculum writer, and educational equity advocate. She is also a Public Voices Fellow of The OpEd Project in partnership with the National Black Child Development Institute.