Generative artificial intelligence has already reshaped industries such as computer programming, retail, and manufacturing. In medicine, however, fears of clinical error have slowed adoption.

At present, two-thirds of doctors report using GenAI tools in practice, though half insist that stronger safeguards are needed.

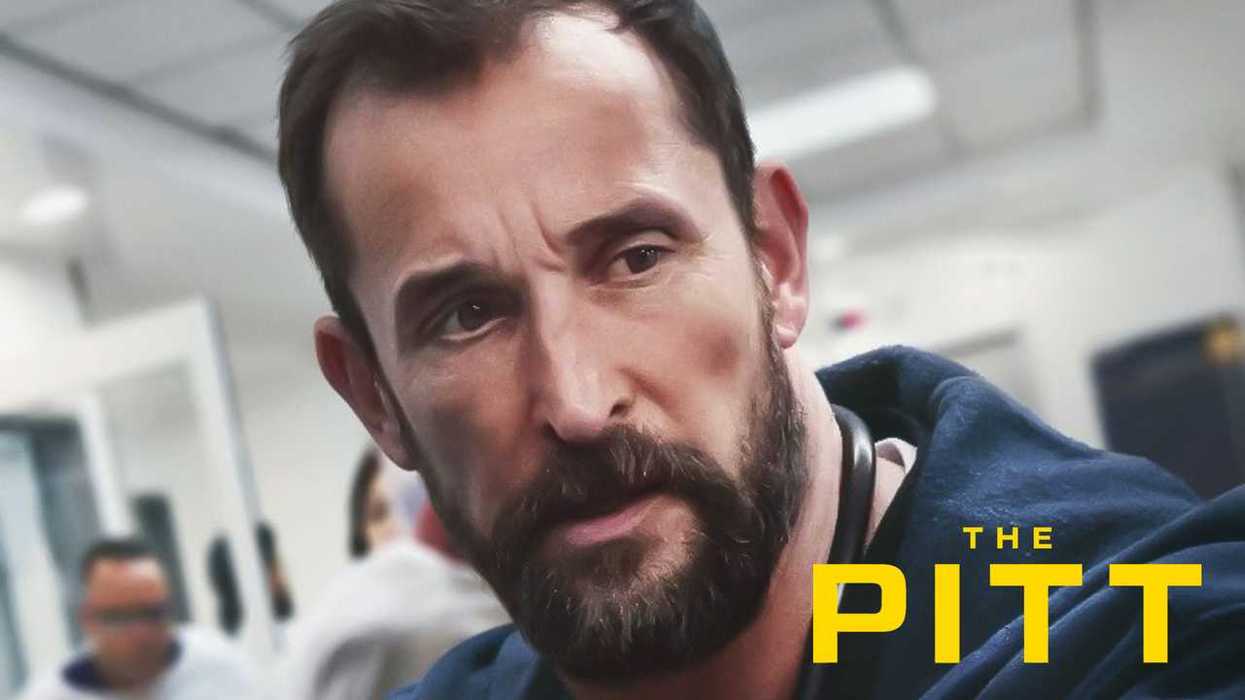

This split — between physicians eager to adopt AI and those wary of its risks — took center stage in a recent episode of HBO’s The Pitt, set in a fictional Pittsburgh emergency department.

In the episode (titled “8:00 A.M.”), protagonist Dr. Michael “Robby” Robinavitch is a veteran emergency physician wary of new technology. Dr. Baran Al-Hashimi, his temporary replacement, is more enthusiastic.

She introduces an AI documentation tool called Ambient Listening to streamline charting. The application saves time and impresses residents. Then it misidentifies a medication, an error that could have had serious consequences had another physician not caught it.

I’ve written extensively about generative AI’s impact on medicine and found the episode riveting. While the show accurately captured the tension between AI’s advocates and skeptics in medicine, what it left out was even more significant than what it portrayed.

What The Pitt gets right about AI in medicine

The Pitt correctly shows that many clinicians remain fearful of this powerful new technology.

In addition to fears that AI will make a mistake that injures a patient, doctors also worry that it will grow too powerful, eventually overriding clinical judgment and diminishing the physician’s role. Both anxieties are reasonable, and together they’ve slowed the adoption of generative AI.

In most hospitals, generative AI has been limited to administrative work: listening to patient encounters, drafting notes for the electronic medical record, summarizing charts, and assisting with billing and coding tasks. Despite the hype, only the most advanced health systems are deploying AI to help doctors and patients reach diagnoses and develop treatment plans.

In that sense, the show gets the central dynamic right. Generative AI in medicine is neither universally embraced as perfect nor completely dismissed as useless. Instead, it has been adopted cautiously in areas unlikely to affect clinical outcomes.

What The Pitt gets wrong about AI in medicine

In one scene, Dr. Al-Hashimi assures her colleagues that the AI system is “98% accurate.” But without context, that figure is deeply misleading.

Accuracy depends on what’s being measured. If Al-Hashimi meant that AI avoids 98% of minor documentation errors, then that figure grossly overstates its performance. But if that statistic means AI makes dangerous errors 2% of the time, there’s no evidence to support that assertion either.

The episode’s biggest error is overstating human performance compared to technological outcomes. When Al-Hashimi tells her colleagues the technology is “excellent, but not perfect” and therefore must always be overseen by a human, she implies clinicians rarely make mistakes. That assumption is grossly inaccurate.

Misdiagnoses contribute to nearly 400,000 American deaths annually, with another 250,000 fatalities linked to preventable medical errors. Studies show that at least half of electronic health records contain at least one mistake, and many are perpetuated when busy physicians copy and paste prior patient notes.

The key question isn’t whether generative AI is flawless. It’s whether it outperforms clinicians working alone. Even a modest drop in misdiagnoses and preventable harm would save tens of thousands of lives each year.

What The Pitt leaves out entirely

The episode focuses on current tensions with GenAI. What it omits is where this technology is headed.

Already, in head-to-head diagnostic comparisons, generative AI performs at levels comparable to human clinicians.

And as tools improve rapidly, the debate will soon shift. The question will no longer be whether GenAI belongs in medicine, but how to deploy it most effectively.

Its greatest impact is unlikely to be in emergency rooms and hospitals. It will be in helping to manage tens of millions of Americans living with chronic disease.

CDC data show that better control of hypertension and diabetes could prevent up to half of heart attacks, strokes, and kidney failure. Today, patients with these illnesses are seen only a few times a year, which helps explain why hypertension is effectively controlled in only half of Americans. Diabetes control rates are even lower.

GenAI can analyze data from home blood pressure cuffs, glucose monitors, and wearables, enabling earlier medication adjustments and timelier treatments. Instead of waiting for a patient’s next office appointment or crisis, clinicians will be able to intervene when warning signs appear. More continuous monitoring has allowed some U.S. health systems to achieve control rates near 90%.

Superior quality at lower cost is the future that The Pitt does not explore. The central debate going forward will not be doctors versus machines. It will be how doctors and technology partner for the benefit of patients. Ultimately, the combination of dedicated clinicians, empowered patients, and generative AI will yield clinical outcomes far better than any one of the three alone.

Robert Pearl, the author of “ChatGPT, MD,” teaches at both the Stanford University School of Medicine and the Stanford Graduate School of Business. He is a former CEO of The Permanente Medical Group.
